-

Title

-

Enabling Improvements: Combining Intervention and Implementation Research

-

Author

-

Schober, Barbara

-

Spiel, Christiane

-

Research Area

-

Special Areas of Interdisciplinary Study

-

Topic

-

Applications of Social Science Knowledge to Policy

-

Related Essays

-

The Role of Data in Research and Policy (Sociology), Barbara A. Anderson

-

Can Public Policy Influence Private Innovation? (Economics), James Bessen

-

To Flop Is Human: Inventing Better Scientific Approaches to Anticipating Failure (Methods), Robert Boruch and Alan Ruby

-

Meta‐Analysis (Methods), Larry V. Hedges and Martyna Citkowicz

-

Misinformation and How to Correct It (Psychology), John Cook et al.

-

Youth Entrepreneurship (Psychology), William Damon et al.

-

Expertise (Sociology), Gil Eyal

-

Controlling the Influence of Stereotypes on One's Thoughts (Psychology), Patrick S. Forscher and Patricia G. Devine

-

Setting One's Mind on Action: Planning Out Goal Striving in Advance (Psychology), Peter M. Gollwitzer

-

The Evidence‐Based Practice Movement (Sociology), Edward W. Gondolf

-

How Brief Social‐Psychological Interventions Can Cause Enduring Effects (Sociology), Dushiyanthini (Toni) Kenthirarajah and Gregory M. Walton

-

Quasi‐Experiments (Methods), Charles S. Reichardt

-

Causation, Theory, and Policy in the Social Sciences (Sociology), Mark C. Stafford and Daniel P. Mears

-

The Social Science of Sustainability (Political Science), Johannes Urpelainen

-

Translational Sociology (Sociology), Elaine Wethington

-

Person‐Centered Analysis (Methods), Alexander von Eye and Wolfgang Wiedermann

-

Identifier

-

etrds0412

-

extracted text

-

Enabling Improvements: Combining Intervention and Implementation Research

BARBARA SCHOBER and CHRISTIANE SPIEL

Abstract

Transferring evidence-based intervention programs effectively into practice and into the wider field of public policy often fails, even though the logic of evidence-based approaches has become highly important in recent years. As a consequence, the field of implementation research has emerged, implementation frameworks have been developed, and implementation studies have been conducted. However, although intervention research and implementation research have both made notable progress, they remain largely unconnected, and different traditions and research groups are involved. This might be one of the key reasons why there are still many problems in transferring evidence-based programs into widespread community practice. To enable improvement in this field, in this essay we argue for a systematic integration of intervention and implementation research as a promising emerging approach. To this end, we recommend a six-step procedure that requires researchers to design and develop intervention programs using a field-oriented and participative approach from the very beginning. In particular, the perspective of policymakers has to be included, as well as the wider context of values, reward systems, and basic attitudes in science.

INTRODUCTION

Given the enormous number of unsolved problems and practical demands, not least in social contexts, transferring existing knowledge and evidence from science into practice is a prominent issue. Education and health, for example, are typical areas in which scientific know-how could contribute rather directly to improving practice. However, transferring scientific evidence and the corresponding intervention programs sustainably into practice and into the wider field of public policy seems difficult and often does not work. As a consequence, a new field of research has emerged: implementation science. Within this field, implementation frameworks have been developed (Meyers, Durlak, & Wandersman, 2012), and numerous implementation studies have been conducted, showing, for example, that an active, accompanied, long-term, and multilevel implementation approach is much more effective than traditional forms of dissemination (Ogden & Fixsen, 2014). However, many challenges remain in transferring evidence-based programs into widespread community practice.

Emerging Trends in the Social and Behavioral Sciences.

Robert Scott and Marlis Buchmann (General Editors) with Stephen Kosslyn (Consulting Editor).

© 2016 John Wiley & Sons, Inc. ISBN 978-1-118-90077-2.

There are various reasons for these problems that could and should be taken into account, but one very important structural constraint seems rather obvious: so far, intervention research and implementation research have not been systematically connected, and many different traditions and research groups are involved. Implementation research is often mandated and financed by parties outside the scientific community and therefore remains rather isolated. Sometimes it is even considered less scientifically valuable than research that develops new interventions (Fixsen, Blase, & Van Dyke, 2011). This lack of anchoring of the new discipline might be one of the key reasons why the efforts of implementation science have not been sufficiently effective so far. On the basis of this assumption, we argue for a systematic integration of intervention and implementation research. To realize it, we propose a six-step procedure that requires researchers to design and develop intervention programs based on a field-oriented and participative approach from the very beginning. The underlying idea is that the successful transfer of evidence into practice, and especially of evidence-based intervention programs into public policy, should become more likely if we abandon the perspective of handing a program over to practitioners only at the end of the research process. Instead, we propose to consider the needs of the field systematically throughout the conceptualization of an intervention as well as during its evaluation and implementation. In this essay, we present the foundations of such an approach and discuss its demands as a promising trend in science (see also Spiel, Schober, & Strohmeier, 2016).

PROGRESS AND LIMITATIONS OF EVIDENCE-BASED INTERVENTIONS AND IMPLEMENTATION RESEARCH IN THE PAST DECADES

In the past decades, the evidence-based movement has gained considerable influence. Especially in Anglo-American contexts, much effort has been put into making better use of research-based programs in human service areas such as medicine, child welfare, and health (Fixsen, Blase, Naoom, & Wallace, 2009; Spiel, 2009). One reason for this trend toward evidence-based measures might be the massive increase in social challenges, which creates a need for proven measures to cope with them. In turn, the lack of such evidence-based measures points to the necessity of transferring relevant existing scientific knowledge and evidence into practice.

Part of the growing evidence-based movement so far has been an effort to ensure good standards of evidence, which is obviously an important prerequisite for bringing prevention or intervention programs into the field. For example, the Society for Prevention Research has provided standards to assist practitioners, policymakers, and administrators in determining which interventions are efficacious, which are effective, and which are ready for dissemination (for details, see Flay et al., 2005). What these standards have in common is that they define evidence-based programs by the research methodology used to evaluate them and regard randomized trials as the gold standard for evidence-based measures (Fixsen et al., 2009).

However, standards alone cannot ensure the transfer of evidence into practice; they are just one aspect of a complex process. Because it has focused on developing and differentiating criteria, the evidence-based practice movement has so far not delivered the intended benefits, at least not to the extent presumably possible. The implementation and transfer of scientific knowledge into practice and into the wider field of public policy have often failed altogether (Fixsen et al., 2009). One important factor was that although program evaluation became an increasingly obligatory part of many initiatives, it often lacked a specific and explicit study and enhancement of the implementation processes. This was acknowledged as a fundamental deficit, based on the insight that an active, long-term, multilevel implementation approach is far more effective than passive forms of dissemination (Ogden & Fixsen, 2014).

As a consequence, the field of implementation research has emerged (Rossi & Wright, 1984). Fixsen, Naoom, Blase, Friedman, and Wallace (2005, p. 5) defined implementation as the “specific set of activities designed to put into practice an activity or program of known dimensions.” Building on this, implementation science has been defined as “the scientific study of methods to promote the systemic uptake of research findings and evidence-based practices into professional practice and public policy” (Forman et al., 2013, p. 80). Implementation science has grown impressively in recent years: several theoretical models and frameworks have been published, and numerous studies have been conducted. However, despite all these efforts, there is a shared understanding among researchers that the empirical support for evidence-based implementation is still insufficient (Ogden & Fixsen, 2014). Although there is a large body of empirical evidence concerning the importance of implementation and growing knowledge of the contextual factors that can influence it, knowledge of how to systematically increase the likelihood of high-quality implementation is still unsatisfactory (for a review, see, e.g., Meyers et al., 2012).


What are the reasons why this very promising approach has not been more successful? One reason is that implementation science is a very young field of research. It has existed for only a few decades, with a rather new focus on complex questions concerning interventions, their evaluation, and beyond. However, even if things simply need more time to take effect and to produce visible results, one very basic structural obstacle can be identified: intervention research and implementation research are largely separated, and joint activities are rare (see, e.g., Forman et al., 2013). Scientific intervention research and its associated activities usually concern the theory-driven development and provision of a prevention or intervention program for clients. Typically, highly motivated people or institutions (e.g., schools) volunteer to carry out the program within a clearly defined period of time. Such programs are often evaluated with a standard evaluation design (e.g., comparisons of different groups or pre-post-follow-up designs, focusing on different levels of effect). The evaluation addresses questions such as the following: Does the program work under optimal conditions? Why does it work? Do the effects persist in the long run? Often, the work of the respective research project is considered complete once these questions have been investigated.

Implementation research, in contrast, often starts only at this point and works with already existing programs. It concerns actions taken within the organizational setting to ensure that the intervention delivered to clients is complete and appropriate, since only then can the assumed effects be expected. Its focus is therefore on the specific conditions of the field in which a measure is conducted and on the needs and competences of all stakeholders involved. Typical questions include the following: How can an organization’s readiness to implement a program be ensured, for example, in terms of sufficient staff capacity? How can staff be given the required competences effectively? Why do proven programs sometimes have unintended effects when carried out in a specific setting?

Often, different research groups with different research traditions are involved in these two tasks. Moreover, the two fields differ in their funding structures and their status in science: intervention researchers are often specialists in certain fields of health or education who fund their research within classic scientific structures, whereas implementation researchers are currently mostly commissioned by politicians to implement already existing interventions. Furthermore, implementation research is very difficult to carry out within the constraints of university research environments (e.g., owing to limited time or funding) and is sometimes even considered less scientifically valuable (Fixsen et al., 2011).

This currently prevailing separation of intervention and implementation research leaves gaps in what should be a coherent improvement process and might explain many of the barriers to a successful transfer of scientific knowledge into practice. We therefore suggest a systematic integration of the two approaches. Researchers should design and develop intervention programs using a fundamentally field-oriented and participative approach, in line with the concept of use-inspired basic research (Stokes, 1997). This means that the specific needs of the field and of the stakeholders involved should be considered not only during implementation, transfer, or scaling up, but throughout the entire conceptualization and evaluation of an intervention (Spiel, Schober, Strohmeier, & Finsterwald, 2011). An intervention, its evaluation, and its implementation should thus be developed in an integrative way. To realize this and to avoid as many anticipated risks as possible, the perspectives of stakeholders at all relevant levels should be included. Especially in fields such as education or health, the perspective of policymakers has to be integrated explicitly, and analyses of the factors supporting or hindering evidence-based policy need to be included (Davies, 2012; Spiel et al., 2011). Admittedly, several researchers would argue that they already work with these ideas in mind, but a systematic approach has so far been missing. On the basis of this diagnosis, we propose an approach for systematically integrating intervention and implementation research in the following section.

A FRAMEWORK FOR AN INTEGRATION OF INTERVENTION AND IMPLEMENTATION RESEARCH: SOME CORNERSTONES OF A NEW APPROACH

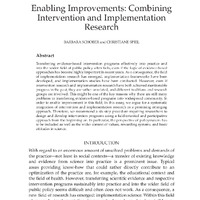

Combining theoretical and empirical knowledge from prior research (Glasgow, Vogt, & Boles, 1999; Greenhalgh, Robert, MacFarlane, Bate, & Kyriakidou, 2004) with the arguments and desiderates described earlier, we consider

at least six deliberate parameters as constitutive components of an integrative

framework of intervention and implementation research (Figure 1). These

parameters can be considered as steps, as they mostly will occur in succession and at least partly build upon each other, even if some of them can and

might be performed simultaneously. The six steps, respectively, parameters

together must be considered as parts of a dynamic process with many subprocesses, feedback loops, and interdependencies (Spiel et al., 2016).

1. Identifying Desiderates for Research with a Focus on Social Responsibility.

In the case of an integrative approach to intervention/prevention and

implementation research, the basic step is to consciously pay attention

to relevant research topics in this context. Consequently, within this

approach, researchers working on topics relevant for interventions and

�Figure 1 Constitutive components of an integrative framework of intervention and implementation research.

Identifying desiderates for research with a focus on social responsibility

Ensuring enough valid knowledge on how to handle the problem

Identifying promising starting points for actions

Cooperating with all stakeholders

in a stable and sustainable manner

Developing measures and

their implementation together

Scaling

up the

program implementation

6

EMERGING TRENDS IN THE SOCIAL AND BEHAVIORAL SCIENCES

�Enabling Improvements: Combining Intervention and Implementation Research

7

for changes in practice should not primarily focus on research desiderates and problems arising from basic research but also on (especially

social) problems in society. This needs the basic attitude of being a

mission-driven researcher, in addition to following a curiosity-driven

approach that is widespread and highly respected in the scientific

community. Therefore, we have to deliberately extend our focus in the

process of identification of valuable research topics and combine quests

for fundamental understanding with a consideration of practical use

(Stokes, 1997). In other words, if scientists intend to contribute to this

field of research, the first step requires sociopolitical responsibility as a

basic mind-set.

2. Ensuring Enough Valid Knowledge on How to Handle the Problem. A second decisive prerequisite for any kind of transfer is the availability of robust and sound scientific knowledge (Spiel, Lösel, & Wittmann, 2009). Reliable research of high scientific quality, with regard to both theory and evidence, is needed. Effective interventions, and evidence-based actions in general, must be based on sufficiently reliable insights into, for example, causal mechanisms and relationships. This is by no means an easy demand, especially in fields such as education or health. Even a quick glance at a topic such as students’ motivation in school and how to enhance it reveals a wide body of literature with some central and undisputed insights, but also many open questions. Consequently, researchers working at the interface between intervention and implementation have to be experts in their fields, with excellent knowledge of theory, methods, empirical findings, and limitations.

3. Identifying Promising Starting Points for Actions. Identifying a desideratum or problem and having relevant insights for initiating change are still not enough if one does not succeed in identifying concrete and promising starting points for interventions and their implementation, given the prevailing conditions and system characteristics. This must be emphasized, as a wide body of research has made clear that many intervention programs and measures do not work in every case or at all times (Meyers et al., 2012). Here again, a necessary condition is high expertise in the relevant scientific field. However, this must be combined with a differentiated view of the prevailing cultural and political conditions. Therefore, researchers who want to successfully integrate intervention and implementation research need knowledge and experience in the relevant practical field and its contextual conditions, including knowledge about potential problems and limitations.


4. Cooperating with All Stakeholders in a Stable and Sustainable Manner. To conduct integrative intervention and implementation research, stable alliances with all stakeholders, and especially with the relevant policymakers, are necessary. However, such connections and working structures have traditionally not been established between science, practice, and politics. Research mostly follows its own intrinsic logic, which often differs clearly from the necessities of practice and from political thinking. A very deliberate process of establishing cooperation and building alliances is therefore necessary. Among other things, this requires greater awareness of policymakers’ scope of action. Researchers in this field have to consider that there are decisive influences on government and policy beyond evidence. These include values, beliefs, and ideology, which are the driving forces of many political processes. Researchers also have to keep in mind that policymaking is highly embedded in a bureaucratic culture and is forced to respond quickly to everyday contingencies, often with very limited resources (Davies, 2012). Consequently, researchers have to find ways to establish the relevance of evidence within the context of all these influencing factors. Admittedly, this step is sometimes burdensome and an unfamiliar demand for many researchers. However, it is a crucial one and again addresses a certain basic attitude of researchers: it requires that researchers make their voices heard.

5. Developing Measures and Their Implementation Together. On the basis of the four steps described above, which in fact constitute the prerequisites for this fifth one, evidence-based measures and their implementation can be developed in a coordinated, theory-driven, ecological, collaborative, and participatory way. This means that researchers who want to conduct integrative intervention and implementation research have to include the perspectives of all relevant stakeholders (practitioners, policymakers, government officials, public servants, and communities) in the development process, communicate in the language of these diverse stakeholders, and meet them as equals. Researchers thus again have to consider parameters for their research work that differ from many traditional approaches: working together right from the beginning is not common in many fields and also requires new conceptions of, for example, research planning (regarding aspects such as the duration of project phases; see Meyers et al., 2012). One big challenge here is to find a balance between considering manifold needs and realizing broad participation on the one hand and maintaining scientific criteria and standards of evidence on the other. Consequently, researchers must have theoretical knowledge and practical experience in their specific field of expertise, but the profile required for a successful “integrative intervention and implementation researcher” is clearly much broader.

6. Scaling Up the Program Implementation. The final step, scaling up, is a classic topic of implementation research as we know it so far. Several fruitful models and guidelines have been proposed here, as Meyers et al. (2012) made evident in their review of 25 frameworks. They identified 14 central dimensions within these frameworks and grouped them into six thematic areas: (i) assessment strategies, (ii) decisions about adaptation, (iii) capacity-building strategies, (iv) creating a structure for implementation, (v) ongoing implementation support strategies, and (vi) improving future applications. According to their synthesis, the implementation process consists of a temporal series of these interrelated steps, which are critical to quality implementation (see also Spiel et al., 2016).

However, unlike prior concepts, our integrative approach requires that the necessities of scaling up, and the stakeholders it involves, be taken into account from the very beginning.

CONCLUSIONS AND FUTURE PERSPECTIVES FOR COMBINING INTERVENTION AND IMPLEMENTATION RESEARCH

In this essay, we have proposed the systematic integration of intervention and implementation research as a promising and necessary trend for future research. From our perspective, such an integration has the potential to enable large-scale improvements because it supports the direct transfer of scientific knowledge into practice. However, why is this a new approach when, on the surface, the steps seem self-evident? And what special demands does enabling future improvement on the basis of such an approach entail?

Regarding the first question, most, if not all, components (both within and across the six steps) are admittedly already known and have been considered in intervention and implementation research. The new and demanding challenge, however, lies in our postulate that they must be brought together in an integrative and coordinated way in order to achieve success. The approach described above represents a very basic but also very systematic research concept that is more than the sum of its steps: ignoring one aspect changes the whole dynamic. In addition, the sound and consistent integration of intervention and implementation research described here requires a (re)differentiation of our scientific identity and the creation of a new, broader job description for researchers in this field.

The conceptual necessity of a basic integration leads directly to the second question, concerning future demands. As has become evident, combining intervention and implementation research is very demanding. Appropriate acknowledgment of this work in the scientific community is therefore essential. Science must change its well-established “provision logic”; consequently, individual researchers cannot be the only ones engaging in this kind of research. Universities also have to include it in their mission. We therefore strongly recommend a discussion of success criteria in academia (Fixsen et al., 2011). The social responsibilities of academics and of universities have to be considered more deeply. The current reward system in science is oriented more toward basic than toward applied research. Mission-driven research that takes up problems in society currently receives less funding and less attention. Consequently, the number of researchers engaged in this field is limited, even though some progress has become apparent in recent years.

All in all, systematic changes in concepts, attitudes, and value structures must be considered, and the stakeholders within the action triangle of science, practice, and policy must develop a culture of appreciation and professionalized communication. The positive starting point is that a great deal of knowledge already exists. However, the success of effective intervention and implementation research in the coming years will depend on how well we succeed in turning a joint commitment to social responsibility for outcomes, beyond purely scientific indices or short-term political success, into action.

REFERENCES

Davies, P. (2012). The state of evidence-based policy evaluation and its role in policy formation. National Institute Economic Review, 219(1), 41–52. doi:10.1177/002795011221900105.

Fixsen, D. L., Blase, K. A., Naoom, S. F., & Wallace, F. (2009). Core implementation components. Research on Social Work Practice, 19(5), 531–540. doi:10.1177/1049731509335549.

Fixsen, D. L., Blase, K., & Van Dyke, M. (2011). Mobilizing communities for implementing evidence-based youth violence prevention programming: A commentary. American Journal of Community Psychology (Special Issue), 48(1–2), 133–137. doi:10.1007/s10464-010-9410-1.

Fixsen, D. L., Naoom, S. F., Blase, K. A., Friedman, R. M., & Wallace, F. (2005). Implementation research: A synthesis of the literature. Tampa, FL: University of South Florida, Louis de la Parte Florida Mental Health Institute, National Implementation Research Network (FMHI Publication No. 231). Retrieved from http://nirn.fpg.unc.edu/resources/implementation-research-synthesis-literature.

Flay, B. R., Biglan, A., Boruch, R. F., Castro, F. G., Gottfredson, D., Kellam, S., … Ji, P. (2005). Standards of evidence: Criteria for efficacy, effectiveness and dissemination. Prevention Science, 6(3), 151–175. doi:10.1007/s11121-005-5553.

Forman, S. G., Shapiro, E. S., Codding, R. S., Gonzales, J. E., Reddy, L. A., Rosenfield, S. A., … Stoiber, K. C. (2013). Implementation science and school psychology. School Psychology Quarterly, 28(2), 77–100. doi:10.1037/spq0000019.


Glasgow, R. E., Vogt, T. M., & Boles, S. M. (1999). Evaluating the public health impact of health promotion interventions: The RE-AIM framework. American Journal of Public Health, 89(9), 1322–1327. doi:10.2105/AJPH.89.9.1322.

Greenhalgh, T., Robert, G., Macfarlane, F., Bate, P., & Kyriakidou, O. (2004). Diffusion of innovations in service organizations: Systematic review and recommendations. The Milbank Quarterly, 82(4), 581–629. doi:10.1111/j.0887-378X.2004.00325.x.

Meyers, D. C., Durlak, J. A., & Wandersman, A. (2012). The quality implementation framework: A synthesis of critical steps in the implementation process. American Journal of Community Psychology, 50(3–4), 462–480. doi:10.1007/s10464-012-9522-x.

Ogden, T., & Fixsen, D. L. (2014). Implementation science: A brief overview and a look ahead. Zeitschrift für Psychologie, 222(1), 4–11. doi:10.1027/2151-2604/a000160.

Rossi, P. H., & Wright, J. D. (1984). Evaluation research: An assessment. Annual Review of Sociology, 10, 331–352. Retrieved from http://www.jstor.org/stable/2083179?seq=1#page_scan_tab_contents.

Spiel, C. (2009). Evidence-based practice: A challenge for European developmental psychology. European Journal of Developmental Psychology, 6(1), 11–33. doi:10.1080/17405620802485888.

Spiel, C., Lösel, F., & Wittmann, W. W. (2009). Transfer psychologischer Erkenntnisse – eine notwendige, jedoch schwierige Aufgabe [Transferring psychological findings: A necessary but difficult task]. Psychologische Rundschau, 60(4), 257–258. doi:10.1026/0033-3042.60.4.257.

Spiel, C., Schober, B., Strohmeier, D., & Finsterwald, M. (2011). Cooperation among researchers, policy makers, administrators, and practitioners: Challenges and recommendations. ISSBD Bulletin, 2(60), 11–14.

Spiel, C., Schober, B., & Strohmeier, D. (2016). Implementing intervention research into public policy – the “I³-approach”. Prevention Science, 1–10. doi:10.1007/s11121-016-0638-3.

Stokes, D. E. (1997). Pasteur’s quadrant: Basic science and technological innovation. Washington, DC: Brookings Institution Press.

FURTHER READING

Fixsen, D. L., Blase, K., Metz, A., & Van Dyke, M. (2013). Statewide implementation of evidence-based programs. Exceptional Children (Special Issue), 79(2), 213–230. doi:10.1177/001440291307900206.

Fixsen, D. L., Naoom, S. F., Blase, K. A., Friedman, R. M., & Wallace, F. (2005). Implementation research: A synthesis of the literature. Tampa, FL: University of South Florida, Louis de la Parte Florida Mental Health Institute, National Implementation Research Network (FMHI Publication No. 231). Retrieved from http://nirn.fpg.unc.edu/resources/implementation-research-synthesis-literature.

Meyers, D. C., Durlak, J. A., & Wandersman, A. (2012). The quality implementation framework: A synthesis of critical steps in the implementation process. American Journal of Community Psychology, 50(3–4), 462–480. doi:10.1007/s10464-012-9522-x.


EMERGING TRENDS IN THE SOCIAL AND BEHAVIORAL SCIENCES

Spiel, C., Schober, B., Strohmeier, D., & Finsterwald, M. (2011). Cooperation among researchers, policy makers, administrators, and practitioners: Challenges and recommendations. ISSBD Bulletin, 2(60), 11–14.

Spiel, C., Schober, B., & Strohmeier, D. (2016). Implementing intervention research into public policy – the “I3-approach”. Prevention Science, 1–10. doi:10.1007/s11121-016-0638-3.

BARBARA SCHOBER SHORT BIOGRAPHY

Barbara Schober is the cochair of the faculty research topic “Promotion of lifelong learning in the educational system.” She is a member of the management board of the Austrian Psychological Society, the senate of the University, and international scientific and advisory boards of research projects and journals. She follows a mission-driven research approach within the field of “Bildung-Psychology” and is the project (co)leader of diverse third-party-funded research projects and the (co)author of numerous international publications and presentations. Her research focuses on lifelong learning, learning motivation, self-regulation, gender differences in educational contexts, teacher training, development and evaluation of intervention programs, and implementation research.

CHRISTIANE SPIEL SHORT BIOGRAPHY

Christiane Spiel has chaired and served on various international advisory and editorial boards; for example, she has been president of the European Society for Developmental Psychology, president of the Austrian Psychology Association, and president of the DeGEval, the Society for Evaluation (in Germany and Austria). She was the founding dean of the faculty of psychology at the University of Vienna and is the vice-chair of the board of directors of the Wuppertal University in Germany. Currently, she is one of the key authors of the International Panel on Social Progress and a member of the board of directors of the Global Implementation Initiative. Her research focuses on bullying and victimization, lifelong learning, integration in multicultural school classes, evaluation and intervention research, implementation science, and quality management in the educational system.

RELATED ESSAYS

The Role of Data in Research and Policy (Sociology), Barbara A. Anderson

Can Public Policy Influence Private Innovation? (Economics), James Bessen

To Flop Is Human: Inventing Better Scientific Approaches to Anticipating Failure (Methods), Robert Boruch and Alan Ruby

Enabling Improvements: Combining Intervention and Implementation Research


Meta-Analysis (Methods), Larry V. Hedges and Martyna Citkowicz

Misinformation and How to Correct It (Psychology), John Cook et al.

Youth Entrepreneurship (Psychology), William Damon et al.

Expertise (Sociology), Gil Eyal

Controlling the Influence of Stereotypes on One’s Thoughts (Psychology), Patrick S. Forscher and Patricia G. Devine

Setting One’s Mind on Action: Planning Out Goal Striving in Advance (Psychology), Peter M. Gollwitzer

The Evidence-Based Practice Movement (Sociology), Edward W. Gondolf

How Brief Social-Psychological Interventions Can Cause Enduring Effects (Sociology), Dushiyanthini (Toni) Kenthirarajah and Gregory M. Walton

Quasi-Experiments (Methods), Charles S. Reichardt

Causation, Theory, and Policy in the Social Sciences (Sociology), Mark C. Stafford and Daniel P. Mears

The Social Science of Sustainability (Political Science), Johannes Urpelainen

Translational Sociology (Sociology), Elaine Wethington

Person-Centered Analysis (Methods), Alexander von Eye and Wolfgang Wiedermann


-

Enabling Improvements: Combining Intervention and Implementation Research

BARBARA SCHOBER and CHRISTIANE SPIEL

Abstract

Transferring evidence-based intervention programs effectively into practice and into the wider field of public policy often fails, even though evidence-based approaches have become highly important in recent years. In response, the field of implementation research has emerged, implementation frameworks have been developed, and implementation studies have been conducted. However, although intervention research and implementation research have both made notable progress, they remain largely unconnected and involve different traditions and research groups. This might be one of the key reasons why transferring evidence-based programs into widespread community practice is still so problematic. To enable improvement in this field, we argue in this essay for a systematic integration of intervention and implementation research as a promising emerging approach. To this end, we recommend a six-step procedure that requires researchers to design and develop intervention programs using a field-oriented and participative approach from the very beginning. In particular, the perspective of policymakers has to be included, as well as the wider context of values, reward systems, and basic attitudes in science.

INTRODUCTION

Given the enormous number of unsolved problems and demands in practice, not least in social contexts, transferring existing knowledge and evidence from science into practice is a prominent issue. Education and health are typical examples of areas in which scientific know-how could contribute rather directly to improving practice. However, transferring scientific evidence and the corresponding intervention programs sustainably into practice and into the wider field of public policy seems difficult and often does not work. As a consequence, a new field of research has emerged: implementation science. Within this field, implementation frameworks have been developed (Meyers, Durlak, & Wandersman, 2012), and numerous implementation studies have been conducted, showing, for example, that an active, accompanied, long-term, and multilevel implementation approach is much more effective than traditional forms of dissemination (Ogden & Fixsen, 2014). However, many challenges remain in transferring evidence-based programs into widespread community practice.

Emerging Trends in the Social and Behavioral Sciences.

Robert Scott and Marlis Buchmann (General Editors) with Stephen Kosslyn (Consulting Editor).

© 2016 John Wiley & Sons, Inc. ISBN 978-1-118-90077-2.

There are various reasons for these problems that could and should be taken into account, but one very important structural constraint seems rather obvious: so far, intervention research and implementation research have not been systematically connected, and many different traditions and research groups are involved. Implementation research is often mandated and financed by parties outside the scientific community and therefore remains rather isolated. Sometimes it is even considered less scientifically valuable than research that develops new interventions (Fixsen, Blase, & Van Dyke, 2011). This lack of anchoring of the new discipline might be one of the key reasons why the efforts of implementation science have not been sufficiently effective so far. On the basis of this assumption, we argue for a systematic integration of intervention and implementation research. To realize this, we propose a six-step procedure that requires researchers to design and develop intervention programs on the basis of a field-oriented and participative approach from the very beginning. The underlying idea is that the successful transfer of evidence into practice, and especially of evidence-based intervention programs into public policy, becomes more likely if we abandon the notion of handing a program over to practitioners only at the end of the research process. We propose to systematically consider the needs of the field throughout the entire conceptualization of an intervention as well as during its evaluation and implementation. In this essay, we present the cornerstones of such an approach and discuss its demands as a promising trend in science (see also Spiel, Schober, & Strohmeier, 2016).

PROGRESS AND LIMITATIONS OF EVIDENCE-BASED INTERVENTIONS AND IMPLEMENTATION RESEARCH IN THE PAST DECADES

In recent decades, the evidence-based movement has gained considerable influence. Especially in Anglo-American contexts, much effort has been put into making better use of research-based programs in human service areas such as medicine, child welfare, and health (Fixsen, Blase, Naoom, & Wallace, 2009; Spiel, 2009). One reason for this trend toward evidence-based measures might be the massive increase in social challenges, which creates a need for proven measures to cope with them. At the same time, the lack of such evidence-based measures in this field points to the necessity of transferring relevant existing scientific knowledge and evidence into practice.

So far, one focus of the growing evidence-based movement has been to ensure high standards of evidence, an obvious prerequisite for bringing prevention or intervention programs into the field. For example, the Society for Prevention Research has provided standards to assist practitioners, policymakers, and administrators in determining which interventions are efficacious, which are effective, and which are ready for dissemination (for details, see Flay et al., 2005). What these standards have in common is that evidence-based programs are defined by the research methodology used to evaluate them, with randomized trials defined as the gold standard for evidence-based measures (Fixsen et al., 2009).

However, standards alone cannot ensure the transfer of evidence into practice; they are just one aspect of a complex process. By focusing on developing and refining criteria, the evidence-based practice movement has therefore not yet delivered the intended benefits, at least not to the extent presumably possible. The implementation and transfer of scientific knowledge into practice and into the wider range of public policy have often failed (Fixsen et al., 2009). One important factor was that although program evaluation became an increasingly obligatory part of many initiatives, it often lacked a specific and explicit study and enhancement of the implementation processes. This was acknowledged as a fundamental deficit, based on the insight that an active, long-term, multilevel implementation approach is far more effective than passive forms of dissemination (Ogden & Fixsen, 2014).

As a consequence, the field of implementation research has emerged (Rossi & Wright, 1984). Fixsen, Naoom, Blase, Friedman, and Wallace (2005, p. 5) defined implementation as the “specific set of activities designed to put into practice an activity or program of known dimensions.” Building on this, implementation science has been defined as “the scientific study of methods to promote the systemic uptake of research findings and evidence-based practices into professional practice and public policy” (Forman et al., 2013, p. 80).

Implementation science has grown impressively in recent years: several theoretical models and frameworks have been published, and numerous studies have been conducted. However, despite all these efforts, there is agreement among researchers that the empirical support for evidence-based implementation is still insufficient (Ogden & Fixsen, 2014). Although there is a large body of empirical evidence concerning the importance of implementation and growing knowledge of the contextual factors that can influence it, knowledge of how to systematically increase the likelihood of high-quality implementation is still unsatisfactory (for a review, see, e.g., Meyers et al., 2012).


What are the reasons for the limited success of this very promising approach? First, implementation science is a very young field of research. It has existed for only a few decades, with a rather new focus on complex questions concerning interventions, their evaluation, and beyond. However, even if the field simply needs more time to take effect and produce visible results, one very basic structural obstacle can be identified: intervention research and implementation research are rather separate, and joint activities are rare (see, e.g., Forman et al., 2013). Scientific intervention research and related activities typically concern the theory-driven development and provision of a prevention or intervention program for clients. The program is then carried out within a clearly defined period of time by highly motivated people or institutions (e.g., schools), who mostly participate voluntarily. Such programs are often evaluated within a standard evaluation design (e.g., comparisons of different groups, pre-post-follow-up designs, a focus on different levels of effect). The evaluation addresses questions such as the following: Does the program work under optimal conditions? Why does it work? Do the effects persist in the long run? Often, the work of the respective research projects is considered done once these questions have been investigated.

Implementation research, by contrast, often starts only at this point and works with already existing programs. It refers to actions taken within the organizational setting to ensure that the intervention delivered to clients is complete and appropriate, as only then can the expected effects be ensured. The focus is therefore on the specific conditions of the field in which a measure is conducted and on the needs and competences of all stakeholders involved. Typical questions are as follows: How can the readiness of an organization to implement a program be ensured, for example, in terms of sufficient staff capacity? How can staff effectively be provided with the required competences? Why do proven programs sometimes exhibit unintended effects when realized in a specific setting?

Often, different research groups with different research traditions are involved in these two tasks. In addition, the two areas differ in their funding structures and in their status in science: intervention researchers are often specialists in certain fields of health or education who fund their research within classic scientific structures, whereas implementation researchers are currently mostly commissioned by politicians to implement already existing interventions. Furthermore, implementation research is very difficult to realize within the constraints of university research environments (e.g., owing to time or financial constraints) and is sometimes even considered less scientifically valuable (Fixsen et al., 2011).

This currently prevailing separation of intervention and implementation research leads to gaps in what should be a coherent improvement process and might account for various barriers to a successful transfer of scientific knowledge into practice. Consequently, we suggest a systematic integration of the two approaches. Researchers should systematically design and develop intervention programs using a fundamentally field-oriented and participative approach, following the concept of use-inspired basic research (Stokes, 1997). This means that the specific needs of the field and of the stakeholders involved should be considered not only in the process of implementation, transfer, or scaling up, but also throughout the conceptualization and evaluation of an intervention (Spiel, Schober, Strohmeier, & Finsterwald, 2011). Consequently, an intervention, its evaluation, and its implementation should be developed in an integrative way. To realize this and to avoid as many foreseeable risks as possible, the perspectives of stakeholders at all relevant levels should be included. Especially in fields such as education or health, the perspective of policymakers has to be integrated explicitly, and analyses of factors supporting or hindering evidence-based policy need to be included (Davies, 2012; Spiel et al., 2011). Admittedly, several researchers would argue that they already work with these ideas in mind, but a systematic approach has been missing so far.

On the basis of this diagnosis, we propose an approach for the systematic integration of intervention and implementation research in the following section.

A FRAMEWORK FOR AN INTEGRATION OF INTERVENTION AND IMPLEMENTATION RESEARCH: SOME CORNERSTONES OF A NEW APPROACH

Combining theoretical and empirical knowledge from prior research (Glasgow, Vogt, & Boles, 1999; Greenhalgh, Robert, Macfarlane, Bate, & Kyriakidou, 2004) with the arguments and desiderates described earlier, we consider at least six parameters to be constitutive components of an integrative framework of intervention and implementation research (Figure 1). These parameters can be regarded as steps, because they mostly occur in succession and at least partly build upon each other, even though some of them can be performed simultaneously. Taken together, the six steps (or parameters) must be considered parts of a dynamic process with many subprocesses, feedback loops, and interdependencies (Spiel et al., 2016).

Figure 1 Constitutive components of an integrative framework of intervention and implementation research. [Figure: six components: identifying desiderates for research with a focus on social responsibility; ensuring enough valid knowledge on how to handle the problem; identifying promising starting points for actions; cooperating with all stakeholders in a stable and sustainable manner; developing measures and their implementation together; scaling up the program implementation.]

1. Identifying Desiderates for Research with a Focus on Social Responsibility. In an integrative approach to intervention/prevention and implementation research, the basic step is to pay conscious attention to research topics that are relevant in this context. Consequently, within this approach, researchers working on topics relevant for interventions and for changes in practice should focus not only on research desiderates and problems arising from basic research but also on (especially social) problems in society. This requires the basic attitude of a mission-driven researcher, in addition to the curiosity-driven approach that is widespread and highly respected in the scientific community. Therefore, we have to deliberately broaden our focus when identifying valuable research topics and combine the quest for fundamental understanding with considerations of practical use (Stokes, 1997). In other words, if scientists intend to contribute to this field of research, the first step requires sociopolitical responsibility as a basic mind-set.

2. Ensuring Enough Valid Knowledge on How to Handle the Problem. A second decisive prerequisite for any kind of transfer is the availability of robust and sound scientific knowledge (Spiel, Lösel, & Wittmann, 2009). Reliable research of high scientific quality is needed, with regard to both theory and evidence. Effective interventions, and evidence-based actions in general, must be based on sufficiently reliable insights into, for example, causal mechanisms and relationships. This is by no means an easy demand, especially in fields such as education or health. Even a quick glance at a topic such as students’ motivation in school and how to enhance it leads to a wide body of literature with some central and undisputed insights, but also with many open questions. Consequently, researchers working at the interface between intervention and implementation have to be experts in their fields, with excellent knowledge of theory, methods, empirical findings, and limitations.

3. Identifying Promising Starting Points for Actions. Identifying a desiderate or problem and having relevant insights for initiating change are still not enough unless concrete and promising starting points for interventions and their implementation can be identified, given the prevailing conditions and system characteristics. This must be emphasized, because a wide body of research has made clear that many intervention programs and measures do not work in every case or at all times (Meyers et al., 2012). Here again, a necessary condition is high expertise in the relevant scientific field. However, this must be combined with a differentiated view of the prevailing cultural and political conditions. Therefore, researchers who want to successfully integrate intervention and implementation research need knowledge of and experience in the relevant practical field and its contextual conditions, including knowledge about potential problems and limitations.


4. Cooperating with All Stakeholders in a Stable and Sustainable Manner. Conducting integrative intervention and implementation research requires stable alliances with all stakeholders, and especially with the relevant policymakers. However, such connections and working structures have traditionally not been established between science, practice, and politics. Research mostly follows its own, very intrinsic logic, which often differs clearly from the necessities of practice and from political thinking. Therefore, a very deliberate process of establishing cooperation and building alliances is necessary. Among other things, this includes greater awareness of policymakers’ scope of action. Researchers in this field have to consider that government and policy are decisively influenced by factors beyond evidence. These include values, beliefs, and ideology, which are the driving forces of many political processes. Researchers have to keep in mind that policymaking is highly embedded in a bureaucratic culture and is forced to respond quickly to everyday contingencies while coping with often very limited resources (Davies, 2012). Consequently, researchers have to find ways to establish the relevance of evidence within the context of all these influencing factors. This step is surely sometimes burdensome and an unfamiliar demand for many researchers. Nevertheless, it is a crucial one, and it again addresses a certain basic attitude: it requires that researchers make their voices heard.

5. Developing Measures and Their Implementation Together. On the basis of the four steps described above, which in fact form the prerequisites for this fifth one, evidence-based measures and their implementation can be developed in a coordinated, theory-driven, ecological, collaborative, and participatory way. This means that researchers who want to realize integrative intervention and implementation research have to include the perspectives of all relevant stakeholders (practitioners, policymakers, government officials, public servants, and communities) in this development process, communicate in the language of these diverse stakeholders, and meet them as equals. Therefore, researchers again have to consider parameters for their research work that differ from many traditional approaches: working together right from the beginning is not common in many fields and also requires new conceptions of, for example, research planning (regarding matters such as the duration of project phases; see Meyers et al., 2012). Here, one big challenge is surely to find a balance between considering manifold needs and realizing wide participation on the one hand and maintaining scientific criteria and standards of evidence on the other. Consequently, researchers must have theoretical knowledge and practical experience in their very specific field of expertise, but the profile required of a successful “integrative intervention and implementation researcher” is obviously much wider.

6. Scaling Up the Program Implementation. The final step, scaling up, is a classic topic of implementation research as we know it so far. Several fruitful models and guidelines have been proposed here, as Meyers et al. (2012) made evident in their review of 25 frameworks. They found 14 central dimensions within these frameworks and grouped them into six thematic areas: (i) assessment strategies, (ii) decisions about adaptation, (iii) capacity-building strategies, (iv) creating a structure for implementation, (v) ongoing implementation support strategies, and (vi) improving future applications. According to their synthesis, the implementation process consists of a temporal series of these interrelated steps, which are critical to quality implementation (see also Spiel et al., 2016).

However, unlike prior concepts, our integrative approach requires that the necessities of scaling up and the stakeholders involved in it be taken into account from the very beginning.

CONCLUSIONS AND FUTURE PERSPECTIVES FOR COMBINING INTERVENTION AND IMPLEMENTATION RESEARCH

In this essay, we propose the systematic integration of intervention and implementation research as a promising and necessary trend for future research. From our perspective, such an integration has the potential to enable large-scale improvements because it supports the direct transfer of scientific knowledge into practice. But why is this a new approach, given that the steps seem self-evident on the surface? And what special demands does enabling future improvement on the basis of such an approach entail?

Regarding the first question, it must be said that most, if not all, components (both within and across the six steps) are already known and have been considered in intervention and implementation research. However, the new and demanding challenge lies in our postulate of bringing them together in an integrative and coordinated way in order to achieve success. The approach described above represents a very basic but also very systematic research concept that is more than the sum of its steps: ignoring one aspect changes the whole dynamic. In addition, the sound, consistent integration of intervention and implementation research described here also requires a (re)differentiation of our scientific identity and the creation of a new, wider job description for researchers in this field.

The conceptual necessity of a basic integration leads directly to the second question, concerning future demands. As shown above, combining intervention and implementation research is very demanding. Therefore, appropriate acknowledgement in the scientific community is essential. Science must change its well-established “provision logic,” and consequently, individual researchers cannot be the only ones engaging in this kind of research. Universities also have to include it in their mission. We therefore strongly recommend a discussion of success criteria in academia (Fixsen et al., 2011). The social responsibilities of academics and of universities have to be considered more deeply. The current reward system in science is oriented more toward basic than toward applied research. Mission-driven research that takes up problems in society currently receives less funding and attention. Consequently, the number of researchers engaged in this field is limited, even though some advances have become evident in recent years.

All in all, some systematic changes in concepts, attitudes, and value structures must be considered, and the stakeholders within the action triangle of science, practice, and policy must develop a culture of appreciation and professionalized communication. The positive starting point is that much knowledge already exists. However, the success of effective intervention and implementation research in the coming years will depend on how well we succeed in putting into action a joint commitment to take social responsibility for outcomes beyond pure scientific indices or short-term political success.

REFERENCES

Davies, P. (2012). The state of evidence-based policy evaluation and its role in policy formation. National Institute Economic Review, 219(1), 41–52. doi:10.1177/002795011221900105.

Fixsen, D. L., Blase, K. A., Naoom, S. F., & Wallace, F. (2009). Core implementation components. Research on Social Work Practice, 19(5), 531–540. doi:10.1177/1049731509335549.

Fixsen, D. L., Blase, K., & Van Dyke, M. (2011). Mobilizing communities for implementing evidence-based youth violence prevention programming: A commentary. American Journal of Community Psychology (Special Issue), 48(1–2), 133–137. doi:10.1007/s10464-010-9410-1.

Fixsen, D. L., Naoom, S. F., Blase, K. A., Friedman, R. M., & Wallace, F. (2005). Implementation research: A synthesis of the literature. Tampa, FL: University of South Florida, Louis de la Parte Florida Mental Health Institute, National Implementation Research Network (FMHI Publication No. 231). Retrieved from http://nirn.fpg.unc.edu/resources/implementation-research-synthesis-literature.

Flay, B. R., Biglan, A., Boruch, R. F., Castro, F. G., Gottfredson, D., Kellam, S., … Ji, P. (2005). Standards of evidence: Criteria for efficacy, effectiveness and dissemination. Prevention Science, 6(3), 151–175. doi:10.1007/s11121-005-5553-y.

Forman, S. G., Shapiro, E. S., Codding, R. S., Gonzales, J. E., Reddy, L. A., Rosenfield, S. A., … Stoiber, K. C. (2013). Implementation science and school psychology. School Psychology Quarterly, 28(2), 77–100. doi:10.1037/spq0000019.


Glasgow, R. E., Vogt, T. M., & Boles, S. M. (1999). Evaluating the public health impact of health promotion interventions: The RE-AIM framework. American Journal of Public Health, 89(9), 1322–1327. doi:10.2105/AJPH.89.9.1322.

Greenhalgh, T., Robert, G., Macfarlane, F., Bate, P., & Kyriakidou, O. (2004). Diffusion of innovations in service organizations: Systematic review and recommendations. The Milbank Quarterly, 82(4), 581–629. doi:10.1111/j.0887-378X.2004.00325.x.

Meyers, D. C., Durlak, J. A., & Wandersman, A. (2012). The quality implementation framework: A synthesis of critical steps in the implementation process. American Journal of Community Psychology, 50(3–4), 462–480. doi:10.1007/s10464-012-9522-x.

Ogden, T., & Fixsen, D. L. (2014). Implementation science: A brief overview and a look ahead. Zeitschrift für Psychologie, 222(1), 4–11. doi:10.1027/2151-2604/a000160.

Rossi, P. H., & Wright, J. D. (1984). Evaluation research: An assessment. Annual Review of Sociology, 10, 331–352. Retrieved from http://www.jstor.org/stable/2083179?seq=1#page_scan_tab_contents.

Spiel, C. (2009). Evidence-based practice: A challenge for European developmental psychology. European Journal of Developmental Psychology, 6(1), 11–33. doi:10.1080/17405620802485888.

Spiel, C., Lösel, F., & Wittmann, W. W. (2009). Transfer psychologischer Erkenntnisse – eine notwendige, jedoch schwierige Aufgabe [Transfer of psychological findings: A necessary but difficult task]. Psychologische Rundschau, 60(4), 257–258. doi:10.1026/0033-3042.60.4.257.

Spiel, C., Schober, B., Strohmeier, D., & Finsterwald, M. (2011). Cooperation among researchers, policy makers, administrators, and practitioners: Challenges and recommendations. ISSBD Bulletin, 2(60), 11–14.

Spiel, C., Schober, B., & Strohmeier, D. (2016). Implementing intervention research into public policy – the “I3-approach”. Prevention Science, 1–10. doi:10.1007/s11121-016-0638-3.

Stokes, D. E. (1997). Pasteur’s quadrant: Basic science and technological innovation. Washington, DC: Brookings Institution Press.

FURTHER READING

Fixsen, D. L., Blase, K., Metz, A., & Van Dyke, M. (2013). Statewide implementation of evidence-based programs. Exceptional Children (Special Issue), 79(2), 213–230. doi:10.1177/001440291307900206.

Fixsen, D. L., Naoom, S. F., Blase, K. A., Friedman, R. M., & Wallace, F. (2005). Implementation research: A synthesis of the literature. Tampa, FL: University of South Florida, Louis de la Parte Florida Mental Health Institute, National Implementation Research Network (FMHI Publication No. 231). Retrieved from http://nirn.fpg.unc.edu/resources/implementation-research-synthesis-literature.

Meyers, D. C., Durlak, J. A., & Wandersman, A. (2012). The quality implementation framework: A synthesis of critical steps in the implementation process. American Journal of Community Psychology, 50(3–4), 462–480. doi:10.1007/s10464-012-9522-x.


Spiel, C., Schober, B., Strohmeier, D., & Finsterwald, M. (2011). Cooperation among researchers, policy makers, administrators, and practitioners: Challenges and recommendations. ISSBD Bulletin, 2(60), 11–14.

Spiel, C., Schober, B., & Strohmeier, D. (2016). Implementing intervention research into public policy – the “I3-approach”. Prevention Science, 1–10. doi:10.1007/s11121-016-0638-3.

BARBARA SCHOBER SHORT BIOGRAPHY

Barbara Schober is the cochair of the faculty research topic “Promotion of lifelong learning in the educational system.” She is a member of the management board of the Austrian Psychological Society, of her university’s senate, and of international scientific and advisory boards of research projects and journals. She follows a mission-driven research approach within the field of “Bildung-Psychology” and is the project (co)leader of various third-party-funded research projects and the (co)author of numerous international publications and presentations. Her research focuses on lifelong learning, learning motivation, self-regulation, gender differences in educational contexts, teacher training, the development and evaluation of intervention programs, and implementation research.

CHRISTIANE SPIEL SHORT BIOGRAPHY

Christiane Spiel has chaired and served on various international organizations and advisory and editorial boards. For example, she has been president of the European Society for Developmental Psychology, president of the Austrian Psychology Association, and president of DeGEval, the Society for Evaluation (in Germany and Austria). She was the founding dean of the Faculty of Psychology at the University of Vienna and is the vice-chair of the board of directors of Wuppertal University in Germany. She is currently one of the key authors of the International Panel on Social Progress and a member of the board of directors of the Global Implementation Initiative. Her research focuses on bullying and victimization, lifelong learning, integration in multicultural school classes, evaluation and intervention research, implementation science, and quality management in the educational system.

RELATED ESSAYS

The Role of Data in Research and Policy (Sociology), Barbara A. Anderson

Can Public Policy Influence Private Innovation? (Economics), James Bessen

To Flop Is Human: Inventing Better Scientific Approaches to Anticipating Failure (Methods), Robert Boruch and Alan Ruby


Meta-Analysis (Methods), Larry V. Hedges and Martyna Citkowicz

Misinformation and How to Correct It (Psychology), John Cook et al.

Youth Entrepreneurship (Psychology), William Damon et al.

Expertise (Sociology), Gil Eyal

Controlling the Influence of Stereotypes on One’s Thoughts (Psychology), Patrick S. Forscher and Patricia G. Devine

Setting One’s Mind on Action: Planning Out Goal Striving in Advance (Psychology), Peter M. Gollwitzer

The Evidence-Based Practice Movement (Sociology), Edward W. Gondolf

How Brief Social-Psychological Interventions Can Cause Enduring Effects (Sociology), Dushiyanthini (Toni) Kenthirarajah and Gregory M. Walton

Quasi-Experiments (Methods), Charles S. Reichardt

Causation, Theory, and Policy in the Social Sciences (Sociology), Mark C. Stafford and Daniel P. Mears

The Social Science of Sustainability (Political Science), Johannes Urpelainen

Translational Sociology (Sociology), Elaine Wethington

Person-Centered Analysis (Methods), Alexander von Eye and Wolfgang Wiedermann


Enabling Improvements: Combining Intervention and Implementation Research

BARBARA SCHOBER and CHRISTIANE SPIEL

Abstract

Transferring evidence-based intervention programs effectively into practice and into the wider field of public policy often fails, even though the logic of evidence-based approaches has become highly important in recent years. As a consequence, the field of implementation research has emerged, implementation frameworks have been developed, and implementation studies have been conducted. However, although intervention research and implementation research have both made notable progress, they remain largely unconnected, and different traditions and research groups are involved. This might be one of the key reasons why there are still many problems in transferring evidence-based programs into widespread community practice. To enable improvement in this field, we argue in this essay for a systematic integration of intervention and implementation research as a promising emerging approach. To this end, we recommend a six-step procedure that requires researchers to design and develop intervention programs using a field-oriented and participative approach from the outset. In particular, the perspective of policymakers has to be included, as well as the wider context of values, reward systems, and basic attitudes in science.

INTRODUCTION

Given the enormous number of unsolved problems and demands in practice, not least in social contexts, the transfer of existing knowledge and evidence from science into practice is a prominent issue. Education and health, for example, are typical areas in which scientific know-how could contribute rather directly to optimizing practice. However, transferring scientific evidence and the respective intervention programs sustainably into practice and into the wider field of public policy seems difficult and often does not work. As a consequence, a new field of research has emerged: implementation science. Within this field, implementation frameworks have been developed (Meyers, Durlak, & Wandersman, 2012), and numerous implementation studies have been conducted. These studies show, for example, that an active, accompanied, long-term, and multilevel implementation approach is much more effective than traditional forms of dissemination (Ogden & Fixsen, 2014). Nevertheless, many challenges remain in transferring evidence-based programs into widespread community practice.

Emerging Trends in the Social and Behavioral Sciences. Robert Scott and Marlis Buchmann (General Editors) with Stephen Kosslyn (Consulting Editor). © 2016 John Wiley & Sons, Inc. ISBN 978-1-118-90077-2.

There are various reasons for these problems, all of which deserve attention, but one important structural constraint seems rather obvious: so far, intervention research and implementation research have not been systematically connected, and many different traditions and research groups are involved. Implementation research is often mandated and financed by parties outside the scientific community and therefore remains rather isolated. It is sometimes even considered less scientifically valuable than research that develops new interventions (Fixsen, Blase, & van Dyke, 2011). This lack of anchoring of the new discipline might be one of the key reasons why the efforts of implementation science have not yet been sufficiently effective. Based on this assumption, we argue for a systematic integration of intervention and implementation research. To realize this, we propose a six-step procedure that requires researchers to design and develop intervention programs based on a field-oriented and participative approach from the very beginning. The underlying idea is that the successful transfer of evidence into practice, and especially of evidence-based intervention programs into public policy, becomes more likely once we abandon the view that a program is handed over to practitioners only at the end of the research process. Instead, we propose systematically considering the needs of the field throughout the conceptualization of an intervention as well as during its evaluation and implementation. In this essay, we present the foundations of such an approach and discuss its demands as a promising trend in science (see also Spiel, Schober, & Strohmeier, 2016).

PROGRESS AND LIMITATIONS OF EVIDENCE-BASED INTERVENTIONS AND IMPLEMENTATION RESEARCH IN THE PAST DECADES

In the past decades, the evidence-based movement has gained considerable influence. Especially in Anglo-American contexts, much effort has been put into making better use of research-based programs in human service areas such as medicine, child welfare, and health (Fixsen, Blase, Naoom, & Wallace, 2009; Spiel, 2009). One reason for this trend toward evidence-based measures might be the massive increase in social challenges, which creates a need for proven measures to cope with them. In turn, the lack of such evidence-based measures in this field points to the necessity of transferring relevant existing scientific knowledge and evidence into practice.

One part of the growing evidence-based movement so far was to ensure