Robot-Mediated Communication

SUSAN C. HERRING

Abstract

Since telepresence robots began entering the US commercial market over a decade

ago, telepresence robot-mediated communication (RMC) has become increasingly

prevalent and relevant. In this essay, I describe key technological properties of telepresence robots, summarize findings regarding communication and social interaction

through such robots, and propose a framework to guide future study of telepresence

robot-mediated discourse and language use. In concluding, I reimagine how telepresence robots could be reconceptualized and redesigned, for example, by moving

beyond human metaphors to incorporate “superhuman” attributes, and raise questions about the intended and unintended consequences of RMC.

WHAT IS ROBOT-MEDIATED COMMUNICATION AND WHY DOES IT

MATTER?

As robots become increasingly common human helpers and companions, the

study of human–robot interaction has surged in fields such as ergonomics,

healthcare, education, and human–computer interaction. This includes

interest in how people communicate with and through robots that are

teleoperated by humans, or what in this essay is referred to as robot-mediated

communication (RMC).

RMC is human–human communication in which at least one party is telepresent through voice, video, and motion in physical space via a remotely

controlled robot (Herring, 2015).1 Sometimes described as “videoconferencing on wheels” (Desai, Tsui, Yanco, & Uhlik, 2011), RMC adds to real-time audio

and video the ability of a person to navigate and move about in a remote

physical location embodied as, or through the proxy of, a robot.2 Because of

the embodiment and enhanced control that they offer, particularly in terms of

1. Some categories of remotely operated robots, such as teleoperated service robots, robotic toys, and

androids, typically do not include video. These are not considered to be RMC platforms in this essay.

2. RMC is sometimes referred to as embodied video-mediated communication (eVMC; Tsui, Desai, & Yanco,

2012). Alternate names for telepresence robots that can be found in the literature include personal roving

presence (PRoP; Paulos & Canny, 1998), mobile remote presence (MRP; e.g., Takayama & Go, 2012), and

mobile robotic telepresence (MRP; Kristoffersson, Coradeschi, & Loutfi, 2013).

Emerging Trends in the Social and Behavioral Sciences.

Robert Scott and Marlis Buchmann (General Editors) with Stephen Kosslyn (Consulting Editor).

© 2016 John Wiley & Sons, Inc. ISBN 978-1-118-90077-2.

mobility, telepresence robots can provide a richer sense of “being there” than

online videoconferencing technologies such as Skype. They enable a user not

only to communicate at a distance but to be virtually “in two places at once.”

The term RMC was coined by analogy with “computer-mediated communication” (CMC), which refers to human–human communication mediated by

networked computers—especially the Internet—and other digital media.

In that RMC mediates human–human communication and supports social

as well as task-related interaction, it resembles textual modes of CMC such

as email, text messaging, and (micro)blogging; graphical avatar-mediated

communication in virtual worlds; and online audio and video chat. Unlike

most CMC applications, however, RMC is asymmetrical, in that one person

is telepresent via a robot (henceforth, the pilot) while others are physically

present (henceforth, the local interlocutors).3

Communication scholars have carried out little research on RMC to date.4 One reason is that although telepresence robots have existed

since the late 1990s (Paulos & Canny, 1998), high-definition video transmission and remote navigation use a great deal of wireless bandwidth,5 and it

has only become feasible to deploy such robots in real-world contexts since

fiber optic technologies expanded Internet bandwidth in the mid-2000s. The

first commercial telepresence robots were mobile robotic platforms designed

for use by physicians in medical settings. In the past few years, the number

of commercially available telepresence robots has grown,6 and research and

development are accelerating apace.

A consequence of this growth is that RMC is becoming increasingly

prevalent and socially relevant. More people are using telepresence robots

and producing RMC in an expanding range of contexts: business, educational, medical, and social. Telepresence robots are also used in security

and high-risk operations such as surveillance, mining, and search and

rescue, where it would be tedious or unsafe to send humans in; however, as

communication is secondary in such uses (if it is relevant at all), they are not

considered further here.

I research and use RMC in my professional academic life. The discussion

that follows reflects my experiences piloting a variety of telepresence robots

3. It is possible for all parties to be telepresent through multiple robots, but unless the robots need to

act in or on the physical space, it requires fewer resources and less effort for multiple remote interlocutors

to interact in virtual space.

4. An exception is the work of Leila Takayama (Lee & Takayama, 2011; Rae, Takayama, & Mutlu, 2013;

Takayama & Go, 2012), who uses the term RMC in several of her publications.

5. Bandwidth refers to the data throughput capacity of any communication channel

(http://www.encyclopedia.com/topic/Bandwidth.aspx, retrieved December 11, 2015).

6. Some of the more affordable ($2000–$3000) telepresence robots produced in the United States are

the Double, the TeleMe, and the Beam+. More expensive models (ranging from $7000 to $70,000) include

the VGo, the BeamPro, and the iRobot Ava. Recently, cheaper models produced overseas have entered the

market, including the Chinese PadBot (listed at $950) and the Russian Synergy Swan (listed at $999). Prices

are as of February 2016.

(in addition to several that I own) as well as the literature on telepresence

robots and RMC. After briefly describing key technological properties

of telepresence robots, I summarize what research has found regarding

communication and social interaction through such robots, or what I

call RMC broadly construed. Data-driven analysis of the actual, situated,

and embodied communicative practices of interlocutors in telepresence

robot-mediated conversations, or RMC narrowly construed, is largely

lacking so far. Thus, I propose a framework with questions and methods to

guide future study of telepresence robot-mediated discourse and language

use. In the final section, I consider ways in which telepresence robots could

be reconceptualized and redesigned, and raise questions about the intended

and unintended consequences of RMC.

TECHNOLOGICAL PROPERTIES OF TELEPRESENCE ROBOTS

When people think of robots, they tend to think first of autonomous robots

such as R2-D2 and C-3PO in the Star Wars movies. Autonomous robots

depend on preprogrammed commands and artificial intelligence, and they

are limited in their ability to communicate compared to human beings. In

contrast, telepresence robotics is a form of robotic remote control by a human

operator that is used to facilitate geographically distributed communication.

Telepresence robot-mediated interaction is intended to simulate face-to-face

(f2f) communication, and its success (or failure) is typically evaluated in

comparison to f2f interaction. That said, the line between telepresence and

autonomous robots often blurs, as when autonomous features, such as

obstacle avoidance, are incorporated into human-piloted robots.

There are “child-sized” telepresence robots (such as the Telenoid, developed in Japan, which is intended to be held in the local user’s arms) and

smaller table-top devices (such as the Kubi and the MeBot). The focus of

this essay is on “adult-sized” telepresence robots, which are the most widely

deployed and the most studied to date. The following descriptions pertain

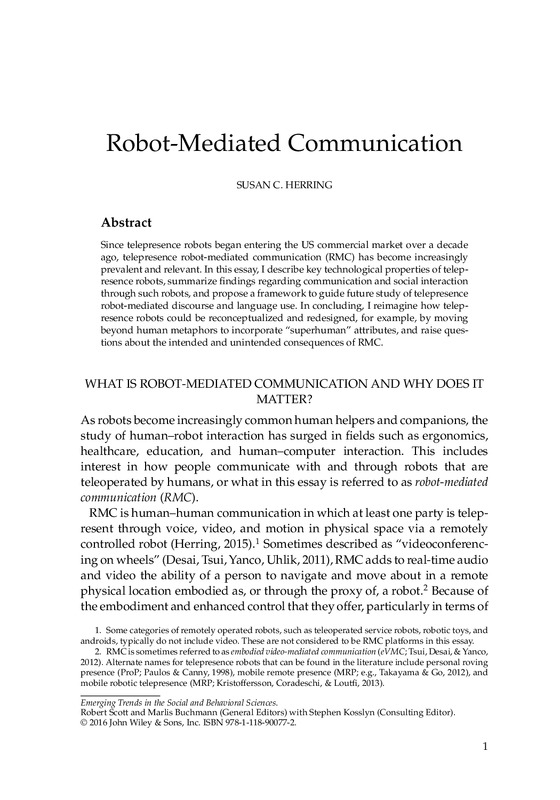

especially to the adult-sized telepresence robots that are currently commercially available in the United States (referred to for convenience simply as

“telepresence robots” or “robots” henceforth), as exemplified by the VGo,

the QB, the BeamPro, the Beam+, the Double, and the iRobot Ava (Figure 1).

These devices are shaped and constrained by a specific set of technological

properties:

Embodiment. A telepresence robot can be designed to look like a human

being, but most versions in use today are not. The “head” of the typical

telepresence robot is (or includes) a video monitor; some robots have

4

EMERGING TRENDS IN THE SOCIAL AND BEHAVIORAL SCIENCES

iRobot Ava

Double

Beam+

QB

VGo

BeamPro

Figure 1 Some commercial telepresence robots.

an iPad or Android tablet as their video monitor. The head and shoulders of the pilot are typically visible in the video screen. The robot’s

“body,” in contrast, is often little more than a vertical column mounted

on a wheeled base, as shown in Figure 1. Robotic arms, because they are

difficult to create and expensive to produce, usually are not included,

although some designs include pointing devices.7

Size. Especially if the intended use is in a work environment, the general

sense among designers is that the robot should be large enough to be

taken seriously, but at the same time, not so large as to be perceived as

intimidating or dangerous. That said, current commercial models range

widely from 11 to 186 pounds, with heights from 2.8 to 6 feet, corresponding roughly to the height of a seated or a (short) standing adult

human. Some robots allow the pilot to adjust the robot’s height while

in use.

Movement. The main movement of telepresence robots is rolling about

in physical space. As with audio- and video-conferencing, most

telepresence robots operate through a WiFi (wireless) network. The

pilot remotely controls the movement of the robot through a computer

interface that features various navigation controls and indicators; some

robots can also be controlled via touch screen or a joystick. Today’s

robots typically have speeds equivalent to a (slow) walking pace.

Some can automatically detect obstacles and edges (such as stairs) and

prevent the robot from rolling into them. Some, such as the iRobot

Ava, are equipped with automated point-to-point navigation. These

automated features let the pilot focus less on navigation and more on

communication.

7. The early PRoP robot, for example, included a two-degrees-of-freedom pointer “so that remote

users [could] point as well as make simple motion[s]” (Paulos & Canny, 1998).

Audio and Video. The pilot “sees” through the robot’s cameras and “hears”

through its microphones, as in video conferencing. According to

Neustaedter, Venolia, Procyk, and Hawkins (2016), the head camera on

the BeamPro, a high-end telepresence robot, provides the equivalent of

20/200 vision. Peripheral vision is also typically limited, and in robots

that use one head camera, depth perception is lacking. To partially

compensate for these limitations, some head cameras include a zoom

feature, and some pan and tilt. Some robots are also equipped with a

camera that provides views down to the base to aid in navigation. As

in videoconferencing, the pilot sees a small picture-in-picture image of

him- or herself in the control interface; however, auditory feedback is

often lacking.

Message Transmission. Telepresence robots support synchronous,

ephemeral, two-way, voice-based communication, similar to audio–

video conferencing and f2f communication. Some also let the pilot

leave text on the robot’s video screen, and my VGo converts text typed

by the pilot into speech by the robot, which can be useful as a backup

when audio transmission problems occur.

These properties have consequences for RMC, both broadly and narrowly

construed.

COMMUNICATION AND SOCIAL INTERACTION THROUGH

TELEPRESENCE ROBOTS

The primary aim of telepresence robots is to foster social interaction between

individuals, or RMC broadly construed. That aim is often thwarted in practice, however, by network problems that result in unsynchronized audio and

video streams or loss of network connectivity. The robot may bump into

things or stall in the middle of a hallway, and audio or video may break up or

be temporarily lost. Audio is more important than video in conveying a sense

of presence; it is also essential for verbal interaction. I have found through

experience that poor audio input quality in combination with limited vision

can make locating and identifying the source of voices in the local environment challenging. Laggy audio can also compromise fundamental aspects

of turn-taking and interruptions (O’Conaill, Whittaker, & Wilbur, 1993). As

Desai et al. (2011) incisively conclude, “audio issues [can] make it difficult to

have any conversation, let alone a natural conversation.”

One must look beyond the present technical limitations of telepresence

robots, however, to appreciate their communicative potential. When the

technology works as it is supposed to, RMC has been found to be more

casual and sociable than video conferencing. Telepresence robots that were

used in technology workplaces for a year or more were found to enhance

“impromptu work meetings” (especially to ask questions, exchange ideas,

and get answers), “being available,” “planned social meetings,” “planned

work meetings,” “seeking people,” and “greeting/socializing” (Lee &

Takayama, 2011). Tsui et al. (2012) found that the novelty effect of using a

telepresence robot wears off quickly, within 15 minutes. Sometimes, the

robot becomes effectively “invisible-in-use” (Takayama & Go, 2012), such

that the remote user and the local interlocutor(s) experience the subjective

illusion of talking f2f.8 Some pilots identify with their robots,

to the point that they feel that their personal space is violated when a

local user approaches too close or touches their robot. These findings lend

support to Lee and Takayama’s (2011) claim that RMC blurs “boundaries …

between person and machine, physical and virtual, and being here vs. being

elsewhere.”

At the same time, RMC can be socially awkward, and new norms of interaction must be negotiated as pilots and local interlocutors mutually adjust to

the technological properties of telepresence robots. For example, local interlocutors are often unsure how to deal with a stalled robot, and may assume

that the interaction has ended and walk away, rather than waiting a few

moments for the pilot to reestablish a network connection or moving the

robot into range of a WiFi hot spot. The robot may impede normal human

traffic flow in a building or block others’ view in meetings or at conferences

without the pilot being aware of it, owing to the robot’s limited range of

vision. The pilot may misgauge social distance due to a lack of depth perception and position the robot too close to or too far away from an interlocutor;

may talk too loudly, owing to a lack of audio feedback; or may linger too long

after a conversation, owing to missed social cues (Lee & Takayama, 2011).

Unless they understand the robot’s technological limitations, local interlocutors may interpret these behaviors as socially inappropriate or rude on the

part of the pilot.

Locals may also ascribe social meaning to the robot’s size. Robot height

influenced local interlocutors’ perceptions of leadership effectiveness in a

study by Rae, Takayama, and Mutlu (2013), with robots that were taller

than locals sitting down perceived as more leader-like than robots that were

shorter than the seated locals.9 Kristoffersson et al. (2013) found that “people

of different [height] preferred robots of different height and adjusted their

distance to them accordingly.” This may have stemmed from a desire to

8. Paradoxically, realistically humanoid (e.g., android-style) robots can distract and detract from the

interaction experience (Mutlu, Yamaoka, Kanda, Ishiguro, & Hagita, 2009), possibly because they only

project the pilot’s voice and movements, and not his or her face. Video is useful in “provid[ing] subtle

information about the motions, actions, and changes at the remote location” (Paulos & Canny, 1998).

9. Girth also matters, it seems. Leila Takayama (personal communication) reports that the thinner

robots have less presence than the wider, more substantial ones.

look the pilot in the eye as much as possible, or to adjust for the perceived

or desired power distance between the pilot and the local interlocutors.

The embodied nature of the robot is also relevant to issues of identifiability

and anonymity. More than one person can pilot a robot (albeit not at the same

time), and one person can pilot multiple robots. When more than one person

uses the same robot or the same kind of robot, the anonymous appearance

of the robot can make the pilot difficult to identify, especially from behind.

Locals sometimes dress or decorate the robot, for example, with a hat, t-shirt,

or scarf, to associate it with particular pilots (Neustaedter et al., 2016). The

robot’s anonymous appearance may be advantageous, however, in circumstances where the remote participants wish to avoid drawing attention to

themselves.

Ambiguity may also arise about a moving robot’s intent, owing to gaze misalignment and a lack of gestural cues—does the pilot want to chat, or is he

or she just trying to pass by? (Neustaedter et al., 2016). As in video conferencing, the pilots’ cameras are often facing in a different direction than their

video image appears to be looking (Lee & Takayama, 2011), and thus the subtle cues that are normally exchanged via gaze f2f are not available in RMC.

The pilot’s gestures are also less visible. Some researchers have suggested

that because facial expressions and nonverbal gestures are not as salient,

telepresence-mediated interaction feels less “real” than f2f communication

(Mantei et al., 1991). My own experience piloting telepresence robots is that

I am “present” at the remote location but not as present as if I were in my

physical body. This too can have advantages: I have observed that locals’

behavior tends to be more unguarded around my robot than it would be if

I were physically present, and it is easier to observe others in social interactions when less focus is on me, as tends to occur once the novelty of the robot

avatar wears off. Indeed, some pilots move the robot from side to side just to

remind locals that they are there.10

Although lacking in the ability to produce human-like social cues, telepresence robots have other ways of signaling intention, such as flashing lights or

gesturing with a laser pointer, and these can develop conventionalized meanings. The robot’s mobility can be a form of body language for starting and

stopping conversations; for example, slight movements may indicate the end

of a conversation (Neustaedter et al., 2016). Simply turning the robot’s head

or body to face the intended addressee is often an effective way to engage.

In multiparty interactions, one study found that turning to look at a current

speaker resulted in more and longer conversational turns. At the same time,

“swiveling toward one [local] participant often meant that another [local]

participant was left looking at the edge of the display screen” (Sirkin et al.,

10. Leila Takayama, personal communication.

2011), and the time required to rotate the display introduced delay into the

conversation, disturbing its natural flow.

Related to movement, the robot’s lack of arms means that it requires

help to manipulate its surroundings (e.g., opening doors, pressing elevator

buttons, moving objects), and this must be negotiated between pilot and

locals. Because of this, some pilots report feeling as though they are disabled

(Lee & Takayama, 2011).11 Locals may share this perception too. Takayama

and Go (2012) found that people have different metaphors for telepresence

robots, and their social expectations depend on those metaphors. Locals who

view the robot as a machine, purely a mediating technology, tend to have

lower social expectations of it; they are more likely to help the robot, while

feeling less constrained by f2f norms of politeness. For instance, they might

not consider it rude to put their feet up on the robot’s base while conversing

with it, or to mute the telepresence robot from the local side (Takayama

& Go, 2012). In contrast, people who think of the robot as a person and a

social actor tend to consider such behaviors impolite. Many subjects who

thought of the robot as a person in Takayama and Go’s study thought of it as

a disabled person. The implications of the disability metaphor for politeness

and helpful behavior have not been studied yet, however.

LANGUAGE AND DISCOURSE IN ROBOT-MEDIATED

COMMUNICATION

“The most important component of communicating through a telepresence

robot” (Tsui et al., 2012), or RMC narrowly construed. Yet although language and discourse in CMC have been described

extensively,12 almost no study has been made of the linguistic choices that

interlocutors make in RMC—their patterns, their variations, their intended

meanings, or their pragmatic effects. Moreover, no research has been based

on close analysis of a corpus of actual RMC. Is language use more or less formal in RMC than in f2f? How do others refer to the robot (as “you,” “s/he,” or “it”), and what factors condition variation in reference? How do the

limited mobility and range of visibility of pilots affect their ability to attract

attention, gain and hold the conversational floor, and time turn-taking appropriately? What is the social and hierarchical status of the pilots: Are they

taken less seriously when they are in positions of authority? Do they receive

politeness and deference the same as if they were physically present, and to

what extent does this vary by gender and culture—theirs and that of their

local interlocutors?

11. In contrast, some physically disabled users, who otherwise might not be able to move about a

remote site, experience robotic embodiment as empowering (Neustaedter et al., 2016).

12. For a recent overview, see Herring and Androutsopoulos (2015).

As a framework that could be used to guide exploration of these and related

questions in the pilot’s and the local interlocutors’ discourse, I identify five

categories of language use in RMC that could fruitfully be researched, organized from smallest to largest linguistic units and from least to most context

dependent, with sample phenomena of potential interest listed for each category. Each category could be addressed by existing linguistic methods of

analysis, modified to take into account the mediating properties of telepresence robots.13 (Italics indicate phenomena that have been touched upon in

the RMC literature.)

Structure. RMC- and context-specific conventions of language use; word

frequencies; formality; organization of speaking turns, exchanges, and

conversations; disfluencies; intonation and volume; gesture.

Meaning: Word Choice/Use. Discourse topics (What is the talk about?); interactive personal pronouns (e.g., “I”, “we”, “you”) and forms of reference

(“s/he”, “it”, “the robot”, etc.); vocabulary diversity and size; metaphor

use.

Meaning: Pragmatics. Speech acts (What are the speakers doing through

language?); determining intention; observations and violations of conversational maxims; politeness; topic initiation, development, and termination; deixis; presupposition, implicature, and so on.

Interaction Management. Attention-getting; greeting; leave-taking (termination of interaction); preferred and dispreferred responses; turn-taking;

back-channeling; pausing/silence/inactivity [verbal vs multimodal

(cf. Licoppe & Morel, 2012)]; conversational repair; gaze; orientation to

current speaker.

Social Phenomena. Stylistic differences according to gender, age, socioeconomic status, culture, role, experience with robots, and so on;

self-presentation and self-revelation; lying and deception; playful

behavior; giving and receiving support; accommodation; conflict and

conflict management; negotiation (e.g., around use of shared space);

power/leadership; influence; deference, and so on.

These categories are not discrete; a single phenomenon could be addressed

on more than one linguistic level. Rather, the categories are intended as different lenses through which RMC can be viewed. Each lens brings into view

different questions, methods, and theoretical perspectives.

Naturally occurring robot-mediated interactions constitute the most

authentic data for studying language use in RMC. There are privacy issues

13. The organization of these categories follows that for computer-mediated discourse analysis, a

paradigm developed for textual CMC (Herring, 2004).

associated with collecting such data, though, as it could be perceived as

mobile surveillance. Moreover, unlike asynchronous web communication,

RMC is not self-archiving; the researcher needs to devise methods of recording, transcription, and presentation for information not normally found in

CMC, such as movement and gaze direction. Nonetheless, close analysis of

language and discourse in RMC is an important and fruitful direction to

pursue in future research.
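One way to make such recordings analyzable is to store each speaking turn together with the nonverbal information that CMC logs lack. The following Python sketch is only an illustration under stated assumptions: the `Turn` record, its field names, and the `tally_reference_forms` helper are hypothetical and do not come from any published RMC coding scheme. The tally is a crude surface count; it cannot tell a referential “it” from an expletive one, so actual analysis would still require manual coding.

```python
import re
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass
class Turn:
    """One speaking turn in a hypothetical RMC transcript."""
    speaker: str                        # e.g. "pilot" or "local1" (assumed labels)
    start_s: float                      # turn onset, seconds from start of recording
    end_s: float                        # turn offset, seconds from start of recording
    text: str                           # orthographic transcription of the turn
    gaze_target: Optional[str] = None   # whom the speaker (or robot display) faces
    robot_motion: Optional[str] = None  # e.g. "rotate-left"; None when stationary


# Forms of reference to the robot/pilot mentioned in the framework.
REFERENCE_FORMS = ("you", "he", "she", "it", "the robot")


def tally_reference_forms(turns, speaker_prefix="local"):
    """Count surface occurrences of each reference form in matching speakers' turns."""
    counts = Counter()
    for turn in turns:
        if not turn.speaker.startswith(speaker_prefix):
            continue
        lowered = turn.text.lower()
        for form in REFERENCE_FORMS:
            pattern = r"\b" + re.escape(form) + r"\b"
            counts[form] += len(re.findall(pattern, lowered))
    return counts
```

A researcher could then compare, for instance, how often local interlocutors say “you” versus “it” while the robot is moving and while it is stationary, by filtering turns on `robot_motion` before tallying.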

Experimental methods are also valuable—for example, for comparing

RMC, both narrowly and broadly construed, with other modes of communication. Such comparisons would spotlight different effects of robot

mediation: RMC compared with f2f communication would shed light

on the effects of the robot proxy; RMC versus video-mediated communication would illuminate the effects of ambulation; and RMC versus

avatar-mediated communication would highlight the differential effects of

physical and virtual environments, for instance.

Finally, surveys and interviews are useful for querying participants

directly about RMC. In addition to their linguistic and social perceptions,

local participants could be asked to what extent they felt that the pilot was

present in a given interaction, toward the larger goal of understanding what

circumstances contribute to creating the effect of “invisible-in-use” robotic

telepresence.

THE FUTURE OF TELEPRESENCE ROBOTICS AND RMC

Referring to autonomous robots, Nourbakhsh (2013, p. xv) recently wrote,

“[T]he ambitions of robotics are no longer limited to imitating [human

beings]. … We have invented a new species, part material and part digital,

that will eventually have superhuman qualities.” Similarly, telepresence

robots need not be limited by “human” metaphors. Present iterations

already have “superhuman” abilities, compared with what is possible in

f2f communication: They enable a person to be in two (or more) places

simultaneously, and they provide mobility across great distances to the

mobility impaired. The first telepresence robot, Eric Paulos’s PRoP, could

also float through the air.

In theory, nearly any autonomous robot in use or development today could

become a telepresence device with the addition of two-way audio–video

communication. Communicating remotely with other people through

“giant, military robo-dogs”14 (Nourbakhsh, 2013) is neither necessary nor

desirable, but the human-pilotable flying robots known as drones could

make useful communication devices, in addition to being able to navigate

14. A reference to Boston Dynamics’ “Big Dog” military robot. See: http://www.bostondynamics.

com/robot_bigdog.html.

outdoors over uneven terrain. Other nonhuman robotic designs suggest

interesting possibilities for remote human interactions, as well, ranging from

telepresence robots in the form of normal-sized dogs (to keep company with

and comfort the ill and elderly) to robots with multiple, highly specialized

arms (for remote surgical procedures).

To enhance robot-enabled multimodal, multicontinental telepresence,

future robots could include built-in navigation and map-creation technology; automated speech translation across languages; augmented reality

technology that overlays the video with information about current or

anticipated interlocutors drawn from an Internet database; and sensors to

collect information about the remote environment, ranging from proxemic

information about when a person is trying to squeeze by to information

about interlocutors’ emotional states. The robots could change appearance

according to who is piloting them at the moment. For example, they could

include screens upon which holographic images are projected, so that a

moving, speaking, three-dimensional representation of the pilot’s physical

self is visible in the remote environment. Making the remote pilot’s identity

readily visible is one way to encourage more human–human interactions,

and making the remote pilot visible from all angles would make bystanders

more likely to enter into conversations (Takayama & Go, 2012). Pilots,

at their end, could wear virtual reality gear to simulate an experience of

immersion in the remote environment, and their body motions could be

tracked and mirrored in the remote robot. The robots themselves could be

flexible, pliable, and gentle to the touch.

Meanwhile, the telepresence literature is filled with recommendations

for ways to improve the current paradigm of “videoconferencing on

wheels.” More and better cameras would provide the pilots with better

situation awareness (Desai et al., 2011). Convex video displays could afford

a wider range of directions for a pilot’s gaze without “turning her back” on

some participants (Sirkin et al., 2011). Some current video conferencing

systems use audio location to work out who is speaking and then focus

cameras on that speaker (Yoshimi & Pingali, 2002); this could also be

done for robots. A screen with a camera embedded in the middle would

aid interlocutors in establishing eye contact (Kristoffersson et al., 2013).

Features to provide remote pilots with more feedback about how they

are presenting themselves—for example, providing mechanisms to help

monitor their volume levels, monitor their appearance, and communicate

nonverbally—could improve the user experience for both remote pilots and

locals (Lee & Takayama, 2011).
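To make the idea of audio location concrete, the sketch below estimates a speaker's bearing from the difference in arrival time of a sound at two microphones, then decides whether to pan a camera toward it. This is a generic far-field, two-microphone approximation offered as an assumption; it is not necessarily the method used in the systems cited, and the function names, the 5-degree deadband, and the sign convention are all hypothetical.

```python
import math

SPEED_OF_SOUND_M_S = 343.0  # approximate value for dry air near 20 degrees C


def bearing_from_delay(delay_s, mic_spacing_m):
    """Estimate the bearing of a sound source in degrees.

    delay_s is (arrival time at left mic) minus (arrival time at right mic),
    so a positive delay means the sound reached the right mic first and the
    source lies to the right (positive bearing). 0 means straight ahead.
    Assumes the source is far away relative to the microphone spacing.
    """
    ratio = SPEED_OF_SOUND_M_S * delay_s / mic_spacing_m
    ratio = max(-1.0, min(1.0, ratio))  # guard against measurement noise
    return math.degrees(math.asin(ratio))


def pan_command(current_pan_deg, bearing_deg, deadband_deg=5.0):
    """Return how many degrees to pan, or 0.0 if already roughly on target."""
    error = bearing_deg - current_pan_deg
    return 0.0 if abs(error) < deadband_deg else error
```

The deadband keeps the camera from twitching in response to small estimation errors, which matters for a robot because rotating the display introduces delay into the conversation.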

The robot’s base could have treads that would allow it to roll over curbs

and climb stairs (Paulos & Canny, 1998). Lasers could assist navigation when

passing through doorways and while driving down hallways; for example,

if the robot drives at an angle toward a wall, the robot could autonomously

correct its direction (Desai et al., 2011). Autonomous navigation is desirable in

general for safety reasons, for ease of use, and to reduce social awkwardness

associated with bumping into walls and other objects. In studies by Desai

et al. (2011), a “follow person” behavior and a “go to destination” mode were

rated as potentially quite useful. However, automation raises issues of ethics

and legal liability. As Takayama and Go (2012) ask, “If a semi-autonomously

navigating MRP (mobile remote presence) system bumps into a person or

damages valuable furniture, who is to blame?” Adjustable autonomy would

allow the pilot to select from a range of autonomous behaviors or levels

according to circumstances.
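
The wall-correction and adjustable-autonomy ideas above can be illustrated with a minimal Python sketch. It is not drawn from Desai et al. (2011) or from any commercial robot: the `AutonomyLevel` names, the `steering_correction` function, the sign convention for the approach angle, and the 0.5 m threshold are all assumptions made for illustration.

```python
from enum import Enum


class AutonomyLevel(Enum):
    """Hypothetical autonomy levels a pilot might select between."""
    MANUAL = 0    # the pilot's steering is passed through unchanged
    ASSISTED = 1  # the robot may correct its heading near walls


def steering_correction(approach_angle_deg: float,
                        wall_distance_m: float,
                        level: AutonomyLevel,
                        safe_distance_m: float = 0.5) -> float:
    """Return a heading change in degrees; 0.0 means no intervention.

    Assumed convention: approach_angle_deg is 0 when the robot drives
    parallel to the wall and positive when it is steering into the wall.
    """
    if level is AutonomyLevel.MANUAL:
        return 0.0
    heading_into_wall = approach_angle_deg > 0.0
    too_close = wall_distance_m < safe_distance_m
    if heading_into_wall and too_close:
        # Turn back by the approach angle so the robot runs parallel to the wall.
        return -approach_angle_deg
    return 0.0
```

Under these assumptions, a pilot who selects `MANUAL` keeps full control, which is one simple way to express adjustable autonomy; the sketch leaves open the liability question raised by Takayama and Go (2012).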

At present, the main bottlenecks to the widespread adoption of telepresence robots are Internet/WiFi reliability and the cost of acquiring units. Costs

are already dropping as new models appear on the market.15 As for lost WiFi

connection due to limitations in range of WiFi and “network shadow” caused

by metal objects such as elevators and lockers, Kristoffersson et al. (2013)

propose automatically “reversing the [robot’s] motion in slow speed until

sufficient access to the WiFi is recovered.” They also suggest adding a light

or backup beep “to indicate the robot’s intention.”
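
The recovery behavior proposed by Kristoffersson et al. (2013) can be sketched as follows, under stated assumptions. The function name `back_up_until_connected`, the recorded `path`, the `signal_at` callback, the `min_signal` threshold, and the `announce` hook (standing in for the light or backup beep) are hypothetical; the source describes the idea only in prose.

```python
from typing import Callable, List, Sequence, Tuple

Position = Tuple[float, float]


def back_up_until_connected(path: Sequence[Position],
                            signal_at: Callable[[Position], float],
                            min_signal: float = 0.5,
                            announce: Callable[[], None] = lambda: None
                            ) -> List[Position]:
    """Retrace a recorded path backwards until the WiFi signal recovers.

    `path` lists the positions the robot has visited, oldest first; the last
    entry is its current position. Returns the positions visited while
    reversing, or an empty list if the robot never needed to move.
    """
    if not path or signal_at(path[-1]) >= min_signal:
        return []  # nothing recorded, or still connected
    announce()  # signal the robot's intention before it starts reversing
    retraced: List[Position] = []
    for position in reversed(path[:-1]):
        retraced.append(position)
        if signal_at(position) >= min_signal:
            break  # sufficient access recovered; stop here
    return retraced
```

If no recorded position has adequate signal, this sketch simply stops at the start of the path; a real system would also need a speed limit and obstacle checks while reversing, which are omitted here.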

Some suggestions from the research literature have already been implemented in robotic prototypes. Articulating arms, for instance, have been

implemented on the MeBot V4 tabletop telepresence robot. The robot has

two arms with three degrees of freedom: shoulder rotation, shoulder extension, and elbow extension; the arm movements are directly controlled by the

pilot, who adjusts the joints on a passive model of the robot (Kristoffersson

et al., 2013). The researchers who designed the MeBot V4 found that local

users rated it as more engaging and likable than a similar static robot design.

The success of this prototype raises the possibility that a similar approach

could be adapted to adult-sized robots, although body tracking would be

more direct. However they are implemented, remotely controllable arms

would greatly increase the ability of telepresence robots to interact with

and in remote environments, rendering them both more sociable (e.g., able

to gesture, shake hands, and hug) and less “disabled” and dependent on

assistance from local interlocutors (e.g., to press elevator buttons and open

doors).

As Nourbakhsh (2013) rightly observes, robot proxies expand our physical space and reach. At present, the implications of that expansion have only begun to be felt, implications that extend beyond the fields of technology design, human–robot interaction, and CMC into the social and behavioral sciences, and from there into any number of applied domains. Rae, Venolia, Tang, and Molnar (2015) caution that designers and researchers should keep in mind what future they are trying to invent with telepresence. Because the focus of this essay is on communication, I have not considered other ways in which telepresence extends human experience that do not primarily involve human–human communication, including actions that would be impossible in one’s physical body, such as extreme mountain climbing, or perceiving/feeling through the eyes/body of another person wearing a telerobotic skin. Such applications of telerobotics raise fascinating questions in their own right.

15. See note 6.

As regards RMC, researchers need to consider the nature of the communication that telepresence robots are used to support: between what kinds of communicators, for what purposes, and in what contexts. Beyond these primary, or intended, uses, what secondary, or unintended, consequences might follow from RMC? The popular media are filled with hype and warnings about our future coexistence with robots. Telepresence robots are human proxies, not autonomous machines; nonetheless, their impact, if they come into widespread use, could be as great as that of autonomous robots. This is all the more true as the line between autonomous and teleoperated robots continues to blur.

ACKNOWLEDGMENTS

Many thanks to Jeannie Fox Tree, Rob Martin, Leila Takayama, and Steve

Whittaker for their valuable input on this essay.

REFERENCES

Desai, M., Tsui, K. M., Yanco, H. A., & Uhlik, C. (2011). Essential features of telepresence robots. In Proceedings of the IEEE international conference on technologies for practical robot applications (TePRA ’11) (pp. 15–20). Los Alamitos, CA: IEEE.

Herring, S. C. (2004). Computer-mediated discourse analysis: An approach to researching online behavior. In S. A. Barab, R. Kling & J. H. Gray (Eds.), Designing for virtual communities in the service of learning (pp. 338–376). New York, NY: Cambridge University Press.

Herring, S. C. (2015). New frontiers in interactive multimodal communication. In A. Georgakopoulou & T. Spilioti (Eds.), The Routledge handbook of language and digital communication (pp. 398–402). London, England: Routledge.

Herring, S. C., & Androutsopoulos, J. (2015). Computer-mediated discourse 2.0. In D. Tannen, H. E. Hamilton & D. Schiffrin (Eds.), The handbook of discourse analysis (Vol. ,2nd ed., pp. 127–151). Chichester, England: John Wiley & Sons.

Kristoffersson, A., Coradeschi, S., & Loutfi, A. (2013). A review of mobile robotic telepresence. Advances in Human-Computer Interaction, 2013, article 3.

Lee, M. K., & Takayama, L. (2011). “Now, I have a body”: Uses and social norms for mobile remote presence in the workplace. In Proceedings of CHI 2011 (pp. 33–42). New York, NY: ACM.

Licoppe, C., & Morel, J. (2012). Video-in-interaction: “Talking heads” and the multimodal organization of mobile and Skype video calls. Research on Language and Social Interaction, 45(4), 399–429.

Mantei, M. M., Baecker, R. M., Sellen, A. J., Buxton, W. A. S., Milligan, T., & Wellman, B. (1991). Experiences in the use of a media space. In Proceedings of CHI 1991 (pp. 203–208). New York, NY: ACM.

Mutlu, B., Yamaoka, F., Kanda, T., Ishiguro, H., & Hagita, N. (2009). Nonverbal leakage in robots: Communication of intentions through seemingly unintentional behavior. In HRI ’09: Proceedings of the 4th international conference on human robot interaction (pp. 69–76). New York, NY: ACM.

Neustaedter, C., Venolia, G., Procyk, J., & Hawkins, D. (2016). To beam or not to beam: A study of remote telepresence attendance at an academic conference. In Proceedings of ACM conference on computer supported cooperative work. New York, NY: ACM.

Nourbakhsh, I. R. (2013). Robot futures. Cambridge, MA: MIT Press.

O’Conaill, B., Whittaker, S., & Wilbur, S. (1993). Conversations over video conferences: An evaluation of the spoken aspects of video-mediated communication. Human-Computer Interaction, 8, 389–428.

Paulos, E., & Canny, J. (1998). PRoP: Personal roving presence. In CHI ’98: Proceedings of the conference on human factors in computing systems (pp. 296–303). New York, NY: ACM Press/Addison-Wesley Publishing Co.

Rae, I., Takayama, L., & Mutlu, B. (2013). The influence of height in robot-mediated communication. In Proceedings of the 8th ACM/IEEE international conference on human–robot interaction (HRI ’13) (pp. 1–8). Piscataway, NJ: IEEE Press.

Rae, I., Venolia, G., Tang, J. C., & Molnar, D. (2015). A framework for understanding and designing telepresence. In Proceedings of the 18th ACM conference on computer supported cooperative work and social computing (CSCW ’15) (pp. 1552–1566). New York, NY: ACM.

Sirkin, D., Venolia, G., Tang, J., Robertson, G., Kim, T., Inkpen, K., . . . Sinclair, M. (2011). Motion and attention in a kinetic videoconferencing proxy. In Human-computer interaction – INTERACT 2011. Lecture notes in computer science (Vol. 6946, pp. 162–180). Berlin, Germany: Springer.

Takayama, L., & Go, J. (2012). Mixing metaphors in mobile remote presence. In Proceedings of computer supported cooperative work (CSCW ’12) (pp. 495–504). New York, NY: ACM.

Tsui, K. M., Desai, M., & Yanco, H. A. (2012). Towards measuring the quality of interaction: Communication through telepresence robots. In Proceedings of the performance metrics for intelligent systems (pp. 101–108). New York, NY: ACM.

Yoshimi, B., & Pingali, G. (2002). A multimodal speaker detection and tracking system for teleconferencing. In Multimedia ’02: Proceedings of the tenth ACM international conference on multimedia (pp. 427–428). New York, NY: ACM.

SUSAN C. HERRING SHORT BIOGRAPHY

Susan C. Herring is Professor of Information Science and Linguistics and Director of the Center for Computer-Mediated Communication at Indiana University Bloomington. Trained in linguistics at the University of California at Berkeley, she was one of the first scholars to apply linguistic methods of analysis to computer-mediated communication (CMC). Her research has focused on structural, pragmatic, interactional, and social phenomena in digital communication, especially as regards gender issues. Her recent interests include online multilingualism, multimodal CMC, and telepresence robot-mediated communication.

Professor Herring is a past editor of the Journal of Computer-Mediated Communication and currently edits the online journal Language@Internet. Her publications include numerous scholarly articles on CMC and three edited volumes: Computer-Mediated Communication: Linguistic, Social and Cross-Cultural Perspectives (Benjamins, 1996), The Multilingual Internet: Language, Culture, and Communication Online (Oxford University Press, 2007, with B. Danet), and The Handbook of Pragmatics of Computer-Mediated Conversation (Mouton, 2013, with D. Stein and T. Virtanen). Professor Herring is a past Fellow of the Center for Advanced Study in the Behavioral Sciences at Stanford University (CASBS) and has been a Visiting Researcher in the Psychology Department at the University of California, Santa Cruz.

Webpage: http://info.ils.indiana.edu/~herring/

CV: http://info.ils.indiana.edu/~herring/cv.html

Center for Computer-Mediated Communication: https://ccmc.ils.indiana.edu

RELATED ESSAYS

Empathy Gaps between Helpers and Help-Seekers: Implications for Cooperation (Psychology), Vanessa K. Bohns and Francis J. Flynn

Identity Fusion (Psychology), Michael D. Buhrmester and William B. Swann Jr.

Mental Models (Psychology), Ruth M. J. Byrne

Authenticity: Attribution, Value, and Meaning (Sociology), Glenn R. Carroll

Spatial Attention (Psychology), Kyle R. Cave

Misinformation and How to Correct It (Psychology), John Cook et al.

Cognitive Processes Involved in Stereotyping (Psychology), Susan T. Fiske and Cydney H. Dupree

The Development of Social Trust (Psychology), Vikram K. Jaswal and Marissa B. Drell

The Neurobiology and Physiology of Emotions: A Developmental Perspective (Psychology), Sarah S. Kahle and Paul D. Hastings

Reconciliation and Peace-Making: Insights from Studies on Nonhuman Animals (Anthropology), Sonja E. Koski

The Psychological Impacts of Cyberlife Engagement (Psychology), Virginia S. Y. Kwan and Jessica E. Bodford

Neural and Cognitive Plasticity (Psychology), Eduardo Mercado III

Gestural Communication in Nonhuman Species (Anthropology), Simone Pika

Class, Cognition, and Face-to-Face Interaction (Sociology), Lauren A. Rivera

Understanding Biological Motion (Psychology), Jeroen J. A. Van Boxtel and Hongjing Lu

Speech Perception (Psychology), Athena Vouloumanos

-

Robot-Mediated Communication

SUSAN C. HERRING

Abstract

Since telepresence robots began entering the US commercial market over a decade

ago, telepresence robot-mediated communication (RMC) has become increasingly

prevalent and relevant. In this essay, I describe key technological properties of telepresence robots, summarize findings regarding communication and social interaction

through such robots, and propose a framework to guide future study of telepresence

robot-mediated discourse and language use. In concluding, I reimagine how telepresence robots could be reconceptualized and redesigned, for example, by moving

beyond human metaphors to incorporate “superhuman” attributes, and raise questions about the intended and unintended consequences of RMC.

WHAT IS ROBOT-MEDIATED COMMUNICATION AND WHY DOES IT

MATTER?

As robots become increasingly common human helpers and companions, the

study of human–robot interaction has surged in fields such as ergonomics,

healthcare, education, and human–computer interaction. This includes

interest in how people communicate with and through robots that are

teleoperated by humans, or what in this essay is referred to as robot-mediated

communication (RMC).

RMC is human–human communication in which at least one party is telepresent through voice, video, and motion in physical space via a remotely

controlled robot (Herring, 2015).1 Sometimes described as “videoconferencing on wheels” (Desai, Tsui, Yanco, Uhlik, 2011), RMC adds to real-time audio

and video the ability of a person to navigate and move about in a remote

physical location embodied as, or through the proxy of, a robot.2 Because of

the embodiment and enhanced control that they offer, particularly in terms of

1. Some categories of remotely operated robots, such as teleoperated service robots, robotic toys, and

androids, typically do not include video. These are not considered to be RMC platforms in this essay.

2. RMC is sometimes referred to as embodied video-mediated communication (eVMC; Tsui, Desai, & Yanco,

2012). Alternate names for telepresence robots that can be found in the literature include personal roving

presence (ProP; Paulos & Canny, 1998), mobile remote presence (MRP; e.g., Takayama & Go, 2012), and

mobile robotic telepresence (MRP; Kristoffersson, Coradeschi, & Loutfi, 2013).

Emerging Trends in the Social and Behavioral Sciences.

Robert Scott and Marlis Buchmann (General Editors) with Stephen Kosslyn (Consulting Editor).

© 2016 John Wiley & Sons, Inc. ISBN 978-1-118-90077-2.

1

2

EMERGING TRENDS IN THE SOCIAL AND BEHAVIORAL SCIENCES

mobility, telepresence robots can provide a richer sense of “being there” than

online videoconferencing technologies such as Skype. They enable a user not

only to communicate at a distance but to be virtually “in two places at once.”

The term RMC was coined by analogy with “computer-mediated communication” (CMC), which refers to human–human communication mediated by

networked computers—especially the Internet—and other digital media.

In that RMC mediates human–human communication and supports social

as well as task-related interaction, it resembles textual modes of CMC such

as email, text messaging, and (micro)blogging; graphical avatar-mediated

communication in virtual worlds; and online audio and video chat. Unlike

most CMC applications, however, RMC is asymmetrical, in that one person

is telepresent via a robot (henceforth, the pilot) while others are physically

present (henceforth, the local interlocutors).3

Not much research on RMC has been carried out by communication scholars to date.4 One reason is that although telepresence robots have existed

since the late 1990s (Paulos & Canny, 1998), high-definition video transmission and remote navigation use a great deal of wireless bandwidth,5 and it

has only become feasible to deploy such robots in real-world contexts since

fiber optic technologies expanded Internet bandwidth in the mid-2000s. The

first commercial telepresence robots were mobile robotic platforms designed

for use by physicians in medical settings. In the past few years, the number

of commercially available telepresence robots has grown,6 and research and

development are accelerating apace.

A consequence of this growth is that RMC is becoming increasingly

prevalent and socially relevant. More people are using telepresence robots

and producing RMC in an expanding range of contexts: business, educational, medical, and social. Telepresence robots are also used in security

and high-risk operations such as surveillance, mining, and search and

rescue, where it would be tedious or unsafe to send humans in; however, as

communication is secondary in such uses (if it is relevant at all), they are not

considered further here.

I research and use RMC in my professional academic life. The discussion

that follows reflects my experiences piloting a variety of telepresence robots

3. It is possible for all parties to be telepresent through multiple robots, but unless the robots need to

act in or on the physical space, it requires fewer resources and less effort for multiple remote interlocutors

to interact in virtual space.

4. An exception is the work of Leila Takayama (Lee & Takayama, 2011; Rae, Takayama, & Mutlu, 2013;

Takayama & Go, 2012), who uses the term RMC in several of her publications.

5. Bandwidth refers to the data throughput capacity of any communication channel

(http://www.encyclopedia.com/topic/Bandwidth.aspx, retrieved December 11, 2015).

6. Some of the more affordable ($2000–$3000) telepresence robots produced in the United States are

the Double, the TeleMe, and the Beam+. More expensive models (ranging from $7000 to $70,000) include

the VGo, the BeamPro, and the iRobot Ava. Recently, cheaper models produced overseas have entered the

market, including the Chinese PadBot (listed at $950) and the Russian Synergy Swan (listed at $999). Prices

are as of February 2016.

Robot-Mediated Communication

3

(in addition to several that I own) as well as the literature on telepresence

robots and RMC. After briefly describing key technological properties

of telepresence robots, I summarize what research has found regarding

communication and social interaction through such robots, or what I

call RMC broadly construed. Data-driven analysis of the actual, situated,

and embodied communicative practices of interlocutors in telepresence

robot-mediated conversations, or RMC narrowly construed, is largely

lacking so far. Thus, I propose a framework with questions and methods to

guide future study of telepresence robot-mediated discourse and language

use. In the final section, I consider ways in which telepresence robots could

be reconceptualized and redesigned, and raise questions about the intended

and unintended consequences of RMC.

TECHNOLOGICAL PROPERTIES OF TELEPRESENCE ROBOTS

When people think of robots, they tend to think first of autonomous robots

such as R2-D2 and C-3PO in the Star Wars movies. Autonomous robots

depend on preprogrammed commands and artificial intelligence, and they

are limited in their ability to communicate compared to human beings. In

contrast, telepresence robotics is a form of robotic remote control by a human

operator that is used to facilitate geographically distributed communication.

Telepresence robot-mediated interaction is intended to simulate face-to-face

(f2f) communication, and its success (or failure) is typically evaluated in

comparison to f2f interaction. That said, the line between telepresence and

autonomous robots often blurs, as when autonomous features, such as

obstacle avoidance, are incorporated into human-piloted robots.

There are “child-sized” telepresence robots (such as the Telenoid, developed in Japan, which is intended to be held in the local user’s arms) and

smaller table-top devices (such as the Kubi and the MeBot). The focus of

this essay is on “adult-sized” telepresence robots, which are the most widely

deployed and the most studied to date. The following descriptions pertain

especially to the adult-sized telepresence robots that are currently commercially available in the United States (referred to for convenience simply as

“telepresence robots” or “robots” henceforth), as exemplified by the VGo,

the QB, the BeamPro, the Beam+, the Double, and the iRobot Ava (Figure 1).

These devices are shaped and constrained by a specific set of technological

properties:

Embodiment. A telepresence robot can be designed to look like a human

being, but most versions in use today are not. The “head” of the typical

telepresence robot is (or includes) a video monitor; some robots have

4

EMERGING TRENDS IN THE SOCIAL AND BEHAVIORAL SCIENCES

iRobot Ava

Double

Beam+

QB

VGo

BeamPro

Figure 1 Some commercial telepresence robots.

an iPad or Android tablet as their video monitor. The head and shoulders of the pilot are typically visible in the video screen. The robot’s

“body,” in contrast, is often little more than a vertical column mounted

on a wheeled base, as shown in Figure 1. Robotic arms, because they are

difficult to create and expensive to produce, usually are not included,

although some designs include pointing devices.7

Size. Especially if the intended use is in a work environment, the general

sense among designers is that the robot should be large enough to be

taken seriously, but at the same time, not so large as to be perceived as

intimidating or dangerous. That said, current commercial models range

widely from 11 to 186 pounds, with heights from 2.8 to 6 feet, corresponding roughly to the height of a seated or a (short) standing adult

human. Some robots allow the pilot to adjust the robot’s height while

in use.

Movement. The main movement of telepresence robots is rolling about

in physical space. Similar to audio- and video-conferencing, most

telepresence robots operate through a WiFi (wireless) network. The

pilot remotely controls the movement of the robot through a computer

interface that features various navigation controls and indicators; some

robots can also be controlled via touch screen or a joystick. Today’s

robots typically have speeds equivalent to a (slow) walking pace.

Some can automatically detect obstacles and edges (such as stairs) and

prevent the robot from rolling into them. Some, such as the iRobot

Ava, are equipped with automated point-to-point navigation. These

automated features let the pilot focus less on navigation and more on

communication.

7. The early PRoP robot, for example, included a two-degrees-of-freedom pointer “so that remote

users [could] point as well as make simple motion[s]” (Paulos & Canny, 1998).

Robot-Mediated Communication

5

Audio and Video. The pilot “sees” through the robot’s cameras and “hears”

through its microphones, like in video conferencing. According to

Neustaedter, Venolia, Procyk, and Hawkins (2016), the head camera on

the BeamPro, a high-end telepresence robot, provides the equivalent of

20/200 vision. Peripheral vision is also typically limited, and in robots

that use one head camera, depth perception is lacking. To partially

compensate for these limitations, some head cameras include a zoom

feature, and some pan and tilt. Some robots are also equipped with a

camera that provides views down to the base to aid in navigation. As

in videoconferencing, the pilot sees a small picture-in-picture image of

him- or herself in the control interface; however, auditory feedback is

often lacking.

Message Transmission. Telepresence robots support synchronous,

ephemeral, two-way, voice-based communication, similar to audio–

video conferencing and f2f communication. Some also let the pilot

leave text on the robot’s video screen, and my VGo converts text typed

by the pilot into speech by the robot, which can be useful as a backup

when audio transmission problems occur.

These properties have consequences for RMC, both broadly and narrowly

construed.

COMMUNICATION AND SOCIAL INTERACTION THROUGH

TELEPRESENCE ROBOTS

The primary aim of telepresence robots is to foster social interaction between

individuals, or RMC broadly construed. That aim is often thwarted in practice, however, by network problems that result in unsynchronized audio and

video streams or loss of network connectivity. The robot may bump into

things or stall in the middle of a hallway, and audio or video may break up or

be temporarily lost. Audio is more important than video in conveying a sense

of presence; it is also essential for verbal interaction. I have found through

experience that poor audio input quality in combination with limited vision

can make locating and identifying the source of voices in the local environment challenging. Laggy audio can also compromise fundamental aspects

of turn-taking and interruptions (O’Conaill, Whittaker, & Wilbur, 1993). As

Desai et al. (2011) incisively conclude, “audio issues [can] make it difficult to

have any conversation, let alone a natural conversation.”

One must look beyond the present technical limitations of telepresence

robots, however, to appreciate their communicative potential. When the

technology works like it is supposed to, RMC has been found to be more

casual and sociable than video conferencing. Telepresence robots that were

6

EMERGING TRENDS IN THE SOCIAL AND BEHAVIORAL SCIENCES

used in technology workplaces for a year or more were found to enhance

“impromptu work meetings” (especially to ask questions, exchange ideas,

and get answers), “being available,” “planned social meetings,” “planned

work meetings,” “seeking people,” and “greeting/socializing” (Lee &

Takayama, 2011). Tsui et al. (2012) found that the novelty effect of using a

telepresence robot wears off quickly, within 15 minutes. Sometimes, the

robot becomes effectively “invisible-in-use” (Takayama & Go, 2012), such

that the remote user and the local interlocutor(s) experience the subjective

illusion of talking f2f.8 Some pilots identify with the robots as themselves,

to the point that they feel that their personal space is violated when a

local user approaches too close or touches their robot. These findings lend

support to Lee and Takayama (2011)’s claim that RMC blurs “boundaries …

between person and machine, physical and virtual, and being here vs. being

elsewhere.”

At the same time, RMC can be socially awkward, and new norms of interaction must be negotiated as pilots and local interlocutors mutually adjust to

the technological properties of telepresence robots. For example, local interlocutors are often unsure how to deal with a stalled robot, and may assume

that the interaction has ended and walk away, rather than waiting a few

moments for the pilot to reestablish a network connection or moving the

robot into range of a WiFi hot spot. The robot may impede normal human

traffic flow in a building or block others’ view in meetings or at conferences

without the pilot being aware of it, owing to the robot’s limited range of

vision. The pilot may misgauge social distance due to a lack of depth perception and position the robot too close or too far away from an interlocutor;

may talk too loudly, owing to a lack of audio feedback; or may linger too long

after a conversation, owing to missed social cues (Lee & Takayama, 2011).

Unless they understand the robot’s technological limitations, local interlocutors may interpret these behaviors as socially inappropriate or rude on the

part of the pilot.

Locals may also ascribe social meaning to the robot’s size. Robot height

influenced local interlocutors’ perceptions of leadership effectiveness in a

study by Rae, Takayama, and Mutlu (2013), with robots that were taller

than locals sitting down perceived as more leader-like than robots that were

shorter than the seated locals.9 Kristofferson et al. (2013) found that “people

of different [height] preferred robots of different height and adjusted their

distance to them accordingly.” This may have stemmed from a desire to

8. Paradoxically, realistically humanoid (e.g., android-style) robots can distract and detract from the

interaction experience (Mutlu, Yamaoka, Kanda, Ishiguro, Hagita, 2009), possibly because they only

project the pilot’s voice and movements, and not his or her face. Video is useful in “provid[ing] subtle

information about the motions, actions, and changes at the remote location” (Paulos & Canny, 1998).

9. Girth also matters, it seems. Leila Takayama (personal communication) reports that the thinner

robots have less presence than the wider, more substantial ones.

Robot-Mediated Communication

7

look the pilot in the eye as much as possible, or to adjust for the perceived

or desired power distance between the pilot and the local interlocutors.

The embodied nature of the robot is also relevant to issues of identifiability

and anonymity. More than one person can pilot a robot (albeit not at the same

time), and one person can pilot multiple robots. When more than one person

uses the same robot or the same kind of robot, the anonymous appearance

of the robot can make the pilot difficult to identify, especially from behind.

Locals sometimes dress or decorate the robot, for example, with a hat, t-shirt,

or scarf, to associate it with particular pilots (Neustaedter et al., 2016). The

robot’s anonymous appearance may be advantageous, however, in circumstances where the remote participants wish to avoid drawing attention to

themselves.

Ambiguity may also arise about a moving robot’s intent, owing to gaze misalignment and a lack of gestural cues—does the pilot want to chat, or is he

or she just trying to pass by? (Neustaedter et al., 2016). As in video conferencing, the pilots’ cameras are often facing in a different direction than their

video image appears to be looking (Lee & Takayama, 2011), and thus the subtle cues that are normally exchanged via gaze f2f are not available in RMC.

The pilot’s gestures are also less visible. Some researchers have suggested

that because facial expressions and nonverbal gestures are not as salient,

telepresence-mediated interaction feels less “real” than f2f communication

(Mantei et al., 1991). My own experience piloting telepresence robots is that

I am “present” at the remote location but not as present as if I were in my

physical body. This too can have advantages: I have observed that locals’

behavior tends to be more unguarded around my robot than it would be if

I were physically present, and it is easier to observe others in social interactions when less focus is on me, as tends to occur once the novelty of the robot

avatar wears off. Indeed, some pilots move the robot from side to side just to

remind locals that they are there.10

Although lacking in the ability to produce human-like social cues, telepresence robots have other ways of signaling intention, such as flashing lights or

gesturing with a laser pointer, and these can develop conventionalized meanings. The robot’s mobility can be a form of body language for starting and

stopping conversations; for example, slight movements may indicate the end

of a conversation (Neustaedter et al., 2016). Simply turning the robot’s head

or body to face the intended addressee is often an effective way to engage.

In multiparty interactions, one study found that turning to look at a current

speaker resulted in more and longer conversational turns. At the same time,

“swiveling toward one [local] participant often meant that another [local]

participant was left looking at the edge of the display screen” (Sirkin et al.,

10. Leila Takayama, personal communication.

8

EMERGING TRENDS IN THE SOCIAL AND BEHAVIORAL SCIENCES

2011), and the time required to rotate the display introduced delay into the

conversation, disturbing its natural flow.

Related to movement, the robot’s lack of arms means that it requires

help to manipulate its surroundings (e.g., opening doors, pressing elevator

buttons, moving objects), and this must be negotiated between pilot and

locals. Because of this, some pilots report feeling like they are disabled

(Lee & Takayama, 2011).11 Locals may share this perception too. Takayama

and Go (2012) found that people have different metaphors for telepresence

robots, and their social expectations depend on those metaphors. Locals who

view the robot as a machine, purely a mediating technology, tend to have

lower social expectations of it; they are more likely to help the robot, while

feeling less constrained by f2f norms of politeness. For instance, they might

not consider it rude to put their feet up on the robot’s base while conversing

with it, or to mute the telepresence robot from the local side (Takayama

& Go, 2012). In contrast, people who think of the robot as a person and a

social actor tend to consider such behaviors impolite. Many subjects who

thought of the robot as a person in Takayama and Go’s study thought of it as

a disabled person. The implications of the disability metaphor for politeness

and helpful behavior have not been studied yet, however.

LANGUAGE AND DISCOURSE IN ROBOT-MEDIATED

COMMUNICATION

“The most important component of communicating through a telepresence

robot is the conversation itself” (Tsui et al., 2012), or RMC narrowly construed. Yet although language and discourse in CMC have been described

extensively,12 almost no study has been made of the linguistic choices that

interlocutors make in RMC—their patterns, their variations, their intended

meanings, or their pragmatic effects. Moreover, no research has been based

on close analysis of a corpus of actual RMC. Is language use more or less formal in RMC than in f2f? How do others refer to the robot—as “you,” “s/he,”

or “it”—and what factors condition variation in reference? How do the

limited mobility and range of visibility of pilots affect their ability to attract

attention, gain and hold the conversational floor, and time turn-taking appropriately? What is the social and hierarchical status of the pilots: Are they

taken less seriously when they are in positions of authority? Do they receive

the same politeness and deference as if they were physically present, and to

what extent does this vary by gender and culture—theirs and that of their

local interlocutors?

11. In contrast, some physically disabled users, who otherwise might not be able to move about a

remote site, experience robotic embodiment as empowering (Neustaedter et al., 2016).

12. For a recent overview, see Herring and Androutsopoulos (2015).

As a framework that could be used to guide exploration of these and related

questions in the pilot’s and the local interlocutors’ discourse, I identify five

categories of language use in RMC that could fruitfully be researched, organized from smallest to largest linguistic units and from least to most

context-dependent, with sample phenomena of potential interest listed for each category. Each category could be addressed by existing linguistic methods of

analysis, modified to take into account the mediating properties of telepresence robots.13 (Italics indicate phenomena that have been touched upon in

the RMC literature.)

Structure. RMC- and context-specific conventions of language use; word

frequencies; formality; organization of speaking turns, exchanges, and

conversations; disfluencies; intonation and volume; gesture.

Meaning: Word Choice/Use. Discourse topics (What is the talk about?); interactive personal pronouns (e.g., “I”, “we”, “you”) and forms of reference

(“s/he”, “it”, “the robot”, etc.); vocabulary diversity and size; metaphor

use.

Meaning: Pragmatics. Speech acts (What are the speakers doing through

language?); determining intention; observations and violations of conversational maxims; politeness; topic initiation, development, and termination; deixis; presupposition, implicature, and so on.

Interaction Management. Attention-getting; greeting; leave-taking (termination of interaction); preferred and dispreferred responses; turn-taking;

back-channeling; pausing/silence/inactivity [verbal vs multimodal

(cf. Licoppe & Morel, 2012)]; conversational repair; gaze; orientation to

current speaker.

Social Phenomena. Stylistic differences according to gender, age, socioeconomic status, culture, role, experience with robots, and so on;

self-presentation and self-revelation; lying and deception; playful

behavior; giving and receiving support; accommodation; conflict and

conflict management; negotiation (e.g., around use of shared space);

power/leadership; influence; deference, and so on.

These categories are not discrete; a single phenomenon could be addressed

on more than one linguistic level. Rather, the categories are intended as different lenses through which RMC can be viewed. Each lens brings into view

different questions, methods, and theoretical perspectives.

13. The organization of these categories follows that for computer-mediated discourse analysis, a

paradigm developed for textual CMC (Herring, 2004).

Naturally occurring robot-mediated interactions constitute the most

authentic data for studying language use in RMC. There are privacy issues

associated with collecting such data, though, as it could be perceived as

mobile surveillance. Moreover, unlike asynchronous web communication,

RMC is not self-archiving; the researcher needs to devise methods of recording, transcription, and presentation for information not normally found in

CMC, such as movement and gaze direction. Nonetheless, close analysis of

language and discourse in RMC is an important and fruitful direction to

pursue in future research.
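As one illustration of what such a recording scheme might look like, the sketch below represents a transcribed speaking turn together with the movement and gaze information that textual CMC transcripts normally lack, and tags each turn with one or more of the five categories proposed above. It is a minimal sketch only: the field names (speaker, gaze_target, movement, and so on), the helper turns_coded_as, and the two-turn sample transcript are all hypothetical and are not drawn from any existing RMC corpus or annotation standard.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional


class Category(Enum):
    """The five categories of language use proposed in the framework."""
    STRUCTURE = "Structure"
    WORD_CHOICE = "Meaning: Word Choice/Use"
    PRAGMATICS = "Meaning: Pragmatics"
    INTERACTION = "Interaction Management"
    SOCIAL = "Social Phenomena"


@dataclass
class Turn:
    """One speaking turn, with hypothetical fields for robot-specific cues."""
    speaker: str                        # e.g., "pilot" or a local participant label
    start_s: float                      # turn onset, in seconds
    end_s: float                        # turn offset, in seconds
    text: str                           # transcribed speech
    gaze_target: Optional[str] = None   # whom the robot's display/camera faces
    movement: Optional[str] = None      # free-text note on robot motion
    codes: List[Category] = field(default_factory=list)


def turns_coded_as(turns: List[Turn], category: Category) -> List[Turn]:
    """Return the turns that an analyst has tagged with the given category."""
    return [t for t in turns if category in t.codes]


# Invented sample data, for illustration only.
transcript = [
    Turn("pilot", 0.0, 1.4, "Hi, do you have a minute?",
         gaze_target="local_A", movement="rotates toward local_A",
         codes=[Category.INTERACTION]),
    Turn("local_A", 1.6, 2.9, "Sure, what's up?",
         codes=[Category.INTERACTION, Category.PRAGMATICS]),
]
```

Because the categories are not discrete, a turn may carry several codes at once; storing them as a list rather than a single label keeps that overlap visible in the data.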

Experimental methods are also valuable—for example, for comparing

RMC, both narrowly and broadly construed, with other modes of communication. Such comparisons would spotlight different effects of robot

mediation: RMC compared with f2f communication would shed light

on the effects of the robot proxy; RMC versus video-mediated communication would illuminate the effects of ambulation; and RMC versus

avatar-mediated communication would highlight the differential effects of

physical and virtual environments, for instance.

Finally, surveys and interviews are useful for querying participants

directly about RMC. In addition to their linguistic and social perceptions,

local participants could be asked to what extent they felt that the pilot was

present in a given interaction, toward the larger goal of understanding what

circumstances contribute to creating the effect of “invisible-in-use” robotic

telepresence.

THE FUTURE OF TELEPRESENCE ROBOTICS AND RMC

Referring to autonomous robots, Nourbakhsh (2013, p. xv) recently wrote,

“[T]he ambitions of robotics are no longer limited to imitating [human

beings]. … We have invented a new species, part material and part digital,

that will eventually have superhuman qualities.” Similarly, telepresence

robots need not be limited by “human” metaphors. Present iterations

already have “superhuman” abilities, compared with what is possible in

f2f communication: They enable a person to be in two (or more) places

simultaneously, and they provide mobility across great distances to people

with mobility impairments. The first telepresence robot, Eric Paulos’s PRoP, could

also float through the air.

In theory, nearly any autonomous robot in use or development today could

become a telepresence device with the addition of two-way audio–video

communication. Communicating remotely with other people through

“giant, military robo-dogs”14 (Nourbakhsh, 2013) is neither necessary nor

desirable, but the human-pilotable flying robots known as drones could

make useful communication devices, in addition to being able to navigate

outdoors over uneven terrain. Other nonhuman robotic designs suggest

interesting possibilities for remote human interactions, as well, ranging from

telepresence robots in the form of normal-sized dogs (to keep company with

and comfort the ill and elderly) to robots with multiple, highly specialized

arms (for remote surgical procedures).

14. A reference to Boston Dynamics’ “Big Dog” military robot. See: http://www.bostondynamics.com/robot_bigdog.html.

To enhance robot-enabled multimodal, multicontinental telepresence,

future robots could include built-in navigation and map-creation technology; automated speech translation across languages; augmented reality

technology that overlays the video with information about current or

anticipated interlocutors drawn from an Internet database; and sensors to

collect information about the remote environment, ranging from proxemic

information about when a person is trying to squeeze by to information

about interlocutors’ emotional states. The robots could change appearance

according to who is piloting them at the moment. For example, they could

include screens upon which holographic images are projected, so that a

moving, speaking, three-dimensional representation of the pilot’s physical

self is visible in the remote environment. Making the remote pilot’s identity

readily visible is one way to encourage more human–human interactions,

and making the remote pilot visible from all angles would make bystanders

more likely to enter into conversations (Takayama & Go, 2012). Pilots,

at their end, could wear virtual reality gear to simulate an experience of

immersion in the remote environment, and their body motions could be

tracked and mirrored in the remote robot. The robots themselves could be

flexible, pliable, and gentle to the touch.

Meanwhile, the telepresence literature is filled with recommendations

for ways to improve the current paradigm of “videoconferencing on

wheels.” More and better cameras would provide the pilots with better

situation awareness (Desai et al., 2011). Convex video displays could allow

a pilot to direct her gaze over a wider range without “turning her back” on

some participants (Sirkin et al., 2011). Some current videoconferencing

systems use audio location to work out who is speaking and then focus

cameras on that speaker (Yoshimi & Pingali, 2002); this could also be

done for robots. A screen with a camera embedded in the middle would

aid interlocutors in establishing eye contact (Kristoffersson et al., 2013).

Features to provide remote pilots with more feedback about how they

are presenting themselves—for example, providing mechanisms to help

monitor their volume levels, monitor their appearance, and communicate

nonverbally—could improve the user experience for both remote pilots and

locals (Lee & Takayama, 2011).

The robot’s base could have treads that would allow it to roll over curbs

and climb stairs (Paulos & Canny, 1998). Lasers could assist navigation when

passing through doorways and while driving down hallways; for example,

if the robot drives at an angle toward a wall, the robot could autonomously

correct its direction (Desai et al., 2011). Autonomous navigation is desirable in

general for safety reasons, for ease of use, and to reduce social awkwardness

associated with bumping into walls and other objects. In studies by Desai

et al. (2011), a “follow person” behavior and a “go to destination” mode were

rated as potentially quite useful. However, automation raises issues of ethics

and legal liability. As Takayama and Go (2012) ask, “If a semi-autonomously

navigating MRP (mobile remote presence) system bumps into a person or

damages valuable furniture, who is to blame?” Adjustable autonomy would

allow the pilot to select from a range of autonomous behaviors or levels

according to circumstances.
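To make the idea of adjustable autonomy concrete, the following sketch blends the pilot's steering command with an automatic correction that turns the robot back toward parallel when it approaches a wall at an angle. It is an illustrative sketch under stated assumptions, not a description of any system in the studies cited: the function name blended_turn_rate, the autonomy parameter (0 means fully manual, 1 means fully automatic), the safe_distance threshold, and the proportional gain are all hypothetical.

```python
def blended_turn_rate(pilot_turn, wall_angle, wall_distance, autonomy,
                      safe_distance=0.5, gain=1.0):
    """Blend the pilot's turn command with a wall-avoidance correction.

    pilot_turn    -- turn rate requested by the pilot (rad/s)
    wall_angle    -- signed angle between the robot's heading and the wall
                     (rad); 0 means the robot is driving parallel to the wall
    wall_distance -- distance to the wall (m)
    autonomy      -- hypothetical autonomy level, from 0.0 (fully manual)
                     to 1.0 (fully automatic)
    """
    if not 0.0 <= autonomy <= 1.0:
        raise ValueError("autonomy must be between 0 and 1")

    # Far from any wall, the pilot's command passes through unchanged.
    if wall_distance >= safe_distance:
        return pilot_turn

    # Proportional correction that steers back toward parallel with the wall.
    correction = -gain * wall_angle

    # Weighted blend: the autonomy level sets how much the correction counts.
    return (1.0 - autonomy) * pilot_turn + autonomy * correction
```

With autonomy set to 0 the pilot retains full control even near a wall, and with autonomy set to 1 the correction overrides the pilot entirely; intermediate values correspond to the range of levels from which a pilot might select.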